
Article originally posted on InfoQ. Visit InfoQ

At the inaugural ChaosConf, held in San Francisco, USA, Kolton Andrus presented how chaos experimentation has evolved over the past eight years. He argued that the human and organisational aspects of dealing with failure should not be ignored, and suggested that tooling should support application- and request-level targeting of failure injection tests in order to minimise the potential blast radius.

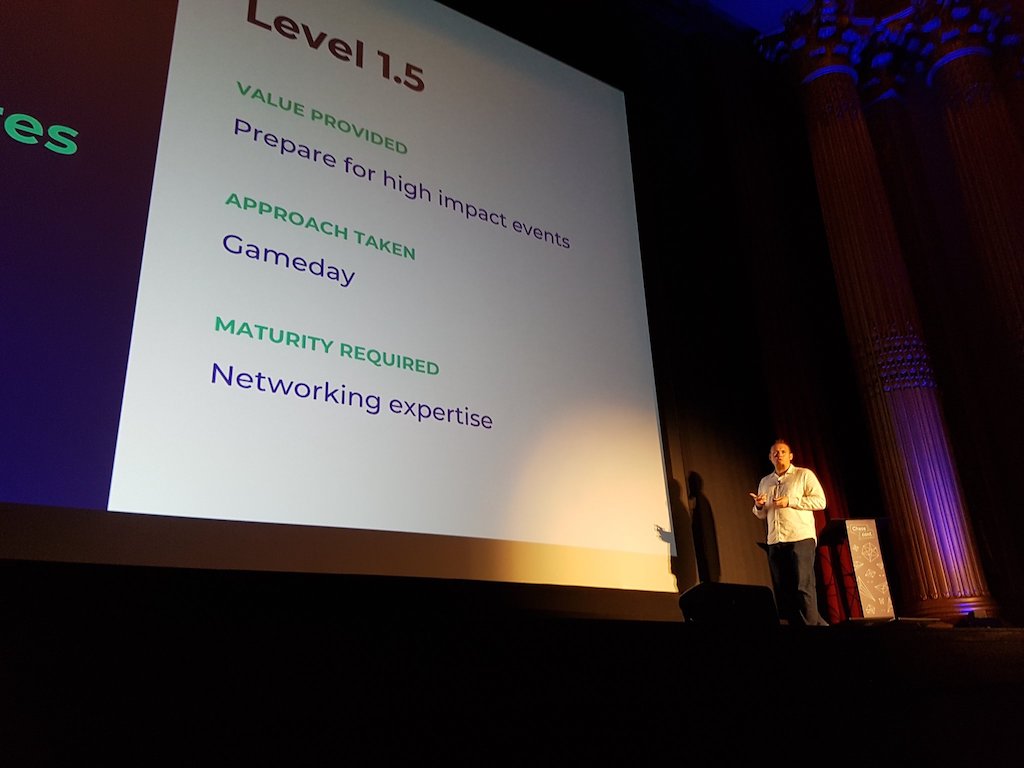

Andrus, CEO of Gremlin, opened the event by discussing the range of chaos experiments he has seen develop in the industry over time. He classified “level 0” experiments as those that prepare for host failures in the cloud. These require relatively little operational maturity and typically use tooling such as Chaos Monkey to inject host failures into a system at random. As practice matures, “level 1” and “level 1.5” experiments become more disciplined and place additional focus on network failures, which requires networking expertise and more advanced operational maturity.

The human and organisational aspects of handling failure also become more of a focus at level 1.5. Experimentation here often takes the form of “gamedays” that provide training and simulate failure in order to observe how people react in a realistic situation. Andrus cautioned that not all organisations see the value of developing organisational responses to failure and training staff appropriately:

Many of the companies I have worked at implement their on-call training as “here is your pager and dashboard: good luck”. This isn’t acceptable.

Next, Andrus argued that host-level tests and network-level tests at OSI layers 3 and 4 are not sufficient for many organisations that want to run chaos experiments, as finer granularity is required to limit the impact of a test and to test applications safely. He stated that “operators often think in terms of requests”, and for request-level data and metadata to be used to selectively control tests and experiments, tooling needs application-level (layer 7) awareness.

At this point in the talk, Gremlin announced its new Application Level Failure Injection (ALFI) product. ALFI supports “level 2” experimentation by providing request-level precision when targeting the impact of an experiment. This is achieved by specifying “coordinates” within the system and matching the experiment to be run against a set of targets. Coordinates include application concerns such as user identifiers or A/B tests, and platform concerns such as service or geographical region. Engineers can also define their own coordinates using a custom implementation.
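To illustrate how coordinate-based targeting might work in principle, the following Python sketch matches a request's attributes against an experiment's target coordinates. It is not Gremlin's ALFI API: the `Request` fields, the `Experiment` class, its `matches` method and the `tier` custom coordinate are all hypothetical names chosen for illustration, and custom coordinates are simply modelled as functions that derive a value from a request.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional


@dataclass
class Request:
    # Hypothetical request attributes that can act as built-in coordinates.
    user_id: str
    service: str
    region: str
    ab_group: Optional[str] = None


# Hypothetical extension point: a custom coordinate derives a value from a request.
CustomCoordinate = Callable[[Request], str]


@dataclass
class Experiment:
    name: str
    # Required coordinate values; every key must match for the experiment to apply.
    targets: Dict[str, str]
    custom: Dict[str, CustomCoordinate] = field(default_factory=dict)

    def coordinate(self, request: Request, key: str) -> Optional[str]:
        # Custom coordinates take precedence over built-in request attributes.
        if key in self.custom:
            return self.custom[key](request)
        return getattr(request, key, None)

    def matches(self, request: Request) -> bool:
        return all(self.coordinate(request, k) == v for k, v in self.targets.items())


checkout_latency = Experiment(
    name="checkout-latency",
    targets={"service": "checkout", "region": "us-west-2", "tier": "beta"},
    # Hypothetical custom coordinate: users whose ID starts with "beta-" are beta testers.
    custom={"tier": lambda r: "beta" if r.user_id.startswith("beta-") else "standard"},
)

print(checkout_latency.matches(Request("beta-42", "checkout", "us-west-2")))  # True
print(checkout_latency.matches(Request("user-7", "checkout", "us-west-2")))   # False
```

Because every target coordinate must match, adding coordinates to an experiment can only narrow the set of affected requests, which is the property that keeps the blast radius small.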

Andrus concluded his talk by stating that targeted coordinates can be used to minimise the potential “blast radius” of an experiment and to reproduce production outages without disturbing the entire system. Experiments should be scaled up safely and iteratively:

- Validate the user experience using a test user or device

- Run against 1% of traffic and measure the impact

- Repeat for 10% of traffic

- Scale up to 25%, 50%, 100%
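The ramp-up described above can be sketched as a loop over increasing traffic percentages that halts as soon as the measured impact exceeds a threshold. This is a minimal Python sketch under stated assumptions: users are bucketed deterministically by hashing their identifier (the hypothetical `in_experiment` helper), so that users included at an early stage stay included as the percentage grows, while `validate_test_user`, `measure_error_rate` and the 1% error budget are hypothetical stand-ins for whatever checks and metrics a team actually uses.

```python
import hashlib
from typing import Callable, List

# Stages after the test-user check: 1%, 10%, 25%, 50% and 100% of traffic.
RAMP_PERCENTAGES: List[float] = [1.0, 10.0, 25.0, 50.0, 100.0]


def in_experiment(user_id: str, percentage: float) -> bool:
    # Hash the user ID into one of 10,000 buckets so assignment is stable across
    # stages: a user included at 1% is still included at 10%.
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 10_000
    return bucket < percentage * 100


def run_ramp(
    validate_test_user: Callable[[], bool],
    measure_error_rate: Callable[[float], float],
    error_budget: float = 0.01,
) -> float:
    """Return the largest percentage reached before the impact exceeded the budget."""
    if not validate_test_user():
        return 0.0  # stop before any real traffic is exposed
    reached = 0.0
    for pct in RAMP_PERCENTAGES:
        if measure_error_rate(pct) > error_budget:
            break  # halt the ramp; exposure stays at the previous stage
        reached = pct
    return reached


# Simulated measurements in which the error rate jumps once 25% of traffic is affected.
fake_rates = {1.0: 0.001, 10.0: 0.004, 25.0: 0.05, 50.0: 0.1, 100.0: 0.2}
print(run_ramp(lambda: True, lambda pct: fake_rates[pct]))  # 10.0
```

Hashing rather than random sampling is a design choice in this sketch: it keeps each stage a superset of the previous one, so the impact measured at 10% includes the users already observed at 1%.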

Outages can also be reproduced using a similar pattern:

- When an outage occurs, define a hypothesis as to why

- Create an experiment that runs against, for example, a single test user account

- Log in as the test user and load the page/application

- Find the logs/evidence and validate the hypothesis

- Create a pull request to fix the issue
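For the reproduction workflow, the key property is that the injected fault reaches only the test account. The Python sketch below assumes a hypothetical page handler (`load_page`), a hypothetical test account (`TEST_USER_ID`) and a hypothetical hypothesis that the outage was caused by a downstream timeout. It wraps the handler so that the fault is raised only for the test user and logs a line that can later be found as evidence. None of these names come from Gremlin's tooling.

```python
import logging
from typing import Callable, Dict

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("repro")

TEST_USER_ID = "chaos-test-user"  # hypothetical dedicated test account

Handler = Callable[[Dict[str, str]], str]


def inject_for_test_user(handler: Handler, make_fault: Callable[[], Exception]) -> Handler:
    """Wrap a handler so that only requests from TEST_USER_ID see the fault."""
    def wrapped(request: Dict[str, str]) -> str:
        if request.get("user_id") == TEST_USER_ID:
            fault = make_fault()
            # Log evidence so the hypothesis can be checked afterwards.
            log.info("injecting %s for user %s", type(fault).__name__, TEST_USER_ID)
            raise fault
        return handler(request)
    return wrapped


def load_page(request: Dict[str, str]) -> str:
    # Hypothetical stand-in for the real page handler.
    return f"page for {request['user_id']}"


# Hypothesis: the outage was caused by a timeout in a downstream dependency.
page = inject_for_test_user(load_page, lambda: TimeoutError("recommendations service timed out"))

print(page({"user_id": "real-customer"}))  # page for real-customer
try:
    page({"user_id": TEST_USER_ID})
except TimeoutError as err:
    print(f"reproduced: {err}")  # reproduced: recommendations service timed out
```

If the test user's page fails in the same way the production outage did, the hypothesis gains support; if not, the experiment can be revised without any real customer having been affected.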

More details on the inaugural ChaosConf can be found on the conference website, and the video recordings for all of the talks are available on the Gremlin YouTube “ChaosConf 2018” channel.