Article originally posted on InfoQ.

Key Takeaways

- With emerging technologies like machine learning, developers can now achieve much more than ever before. But this new power has a downside.

- When we talk about ethics – the principles that govern a person’s behaviour – it is impossible not to talk about psychology.

- Processes like obedience, conformity, moral disengagement, cognitive dissonance and unethical amnesia all reveal why, though we see ourselves as inherently good, in certain circumstances we are likely to behave badly.

- Recognising that although people aren’t rational, they are to a large degree predictable, has profound implications for how tech and business leaders can approach making their organisations more ethical.

- The strongest way to make a company more ethical is to start with the individual. Companies become ethical one person at a time, one decision at a time. We all want to be seen as good people, a self-image known as our moral identity, and that comes with the responsibility to act like it.

With emerging technologies like machine learning, developers can now achieve much more than ever before. But this new power has a downside. Only recently, Facebook’s Chief Executive apologised in front of the European Parliament for not taking enough responsibility for fake news, foreign interference in elections and developers misusing people’s information. Google then announced its Pentagon AI project, triggering a dozen resignations from its development teams. When writing code, where does your responsibility start? And where does it end? Are your only options to stay and get on with it, or to quit?

When we talk about ethics – the principles that govern a person’s behaviour – it is impossible not to talk about psychology. One field has contributed the most to research on this subject: social psychology, the study of human behaviour in social situations. It aims to explain why we behave in a certain way in certain circumstances.

What Influences Our Behaviour?

On Obedience

One of the earliest theories on the psychology of ethics came from Stanley Milgram, a psychologist at Yale University in the 1960s. Examining the justifications for acts of genocide offered by those accused at the Nuremberg war crimes trials after World War II, he found that their defense was often based on “obedience”: they were just following orders from their superiors.

Milgram (1963) was interested in researching how far people would go in obeying an instruction if it involved harming another person. He set up his first experiment in July 1961, a year after the trial of Adolf Eichmann in Jerusalem. At first he wanted to investigate whether Germans were particularly obedient to authority figures, as this was a common explanation for the Nazi killings in World War II. But he soon discovered all people responded the same way.

Experiments

In his first experiments, volunteers were introduced to another participant (a confederate who was in on the experiment). Their roles were apparently decided by drawing straws, but the draw was fixed: the volunteer would always be the teacher and the confederate would always be the learner.

They were then taken to separate rooms. The learner was strapped to an electric chair and told to learn a list of word pairs. The teacher, positioned in a room with an electric shock generator, would then test the learner by naming a word and asking the learner to recall its pair. The learner was primed to give mainly wrong answers. The teacher was instructed to give an electric shock for every mistake and to increase the shock level with each error (note: no real shocks were given, merely the illusion that the teachers were giving shocks). The shocks started at 15V and went up to 450V. When the teacher refused to administer a shock, the experimenter gave a series of orders to ensure they continued.

65% of the participants were willing to give the strongest, apparently deadly, shock (450V). All participants continued to 300V. Milgram concluded that ordinary people were likely to follow orders given by an authority figure, even to the extent of killing an innocent human being. Obedience to authority is ingrained in us all from the way we are brought up. We learn this response from a very young age at school, in the family and at work, especially when we recognise the authority as morally or legally right.

Many of the studies that followed Milgram’s electric shock experiments have reported even higher obedience rates than those seen in Milgram’s American samples. Some studies, from Italy, Germany, Austria, Spain and Holland, had obedience rates of over 80% (Weiten, 2010).

On Conformity

We like to think of ourselves as strong enough to stand up to a group when we know we are right, right?

Yet research has shown that we are often more prone to conform than we would like. In certain cases people are willing to ignore reality in order to conform to the rest of the group.

Experiments

In the 1950s Solomon Asch, a Polish gestalt psychologist, conducted a series of experiments (the Asch experiments). The experiments revealed the degree to which a person’s own opinions are influenced by those of the group. Asch found that people were willing to knowingly give an incorrect answer in order to conform.

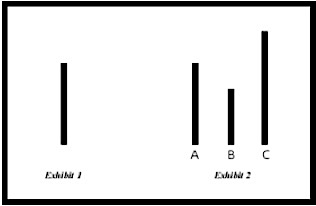

In each experiment, a naive student participant was placed in a room with several confederates who were “in on” the experiment. The subjects were told that they were taking part in a vision test. The confederates had all been told what their responses should be when the line task was presented. The naive participant, however, was not aware that the other students were not real participants. After the line task was presented, each student had to announce aloud which line (A, B or C) matched the target line.

Find the match

Asch found that nearly 75% of participants were willing to ignore reality and give an incorrect answer at least once in order to conform to the rest of the group and avoid ridicule. Asch thus demonstrated that we will lie or deceive ourselves in order to fit in. We have a strong need not to be different.

How Can We Still Sleep At Night?

We encounter moral situations frequently (Hofmann et al., 2014). Indeed, morality is “a uniquely human characteristic – one that sets us apart from other species” (Goodwin et al., 2014).

Because morality is such a fundamental part of human existence, people have a strong incentive to view themselves and be viewed by others as moral individuals. However, when encountering an opportunity to act dishonestly and benefit from it, studies have shown that we often choose to diverge from our moral compass and cheat (Kouchaki and Gino, 2016).

Ellen Klass, a psychologist from New York, found that when we have done something ethically dubious, we tend to ruminate over it which often leads to fragmented or disrupted sleep. However, whereas some people toss and turn at night worrying about something, others seem not that bothered. Why not?

Moral Disengagement is a term from social psychology for the process of convincing the self that ethical standards do not apply to oneself in a particular context. Thus, moral disengagement involves a process of cognitive re-construing or re-framing of destructive behavior as being morally acceptable without changing the behavior or the moral standards.

In their research Baron & Zhao found that personal motives, mainly financial gain, impact our moral disengagement. This explains how entrepreneurs sometimes make unethical decisions that have devastating effects on their companies, stakeholders, and themselves.

On Cognitive Dissonance

American social psychologist Leon Festinger’s 1957 Cognitive Dissonance theory has been described as social psychology’s most notable achievement.

The theory suggests that we have an inner drive to hold all our attitudes and behavior in harmony and avoid disharmony (or dissonance). This is known as the principle of cognitive consistency. When there is an inconsistency between our attitudes and our behavior (dissonance), something must change to eliminate the dissonance.

Cognitive dissonance refers to a situation involving conflicting attitudes, beliefs or behaviors. This produces a feeling of discomfort, which leads us to change one of those attitudes, beliefs or behaviors to reduce the discomfort and restore balance.

Experiment:

Festinger and James M. Carlsmith published their classic cognitive dissonance experiment in 1959. In the experiment, subjects were asked to perform an hour of boring and monotonous tasks (i.e., repeatedly filling and emptying a tray with 12 spools and turning 48 square pegs in a board clockwise). Some subjects, who were led to believe that their participation in the experiment had concluded, were then asked to perform a favor for the experimenter by telling the next participant, who was actually a confederate, that the task was extremely enjoyable. Dissonance was created for the subjects performing the favor, as the task was in fact boring. Half of the paid subjects were given $1 for the favor, while the other half of the paid subjects received $20.

According to behaviorist/reinforcement theory, those who were paid $20 should like the task more because they would associate the payment with the task. Cognitive dissonance theory, on the other hand, would predict that those who were paid $1 would feel the most dissonance, since they had to carry out a boring task and lie to another participant, all for only $1. This would create dissonance between the belief that they were not stupid or evil and the fact that they had carried out a boring task and lied for only a dollar. Therefore, dissonance theory would predict that those in the $1 group would be more motivated to resolve their dissonance by reconceptualizing or rationalizing their actions. They would form the belief that the boring task was, in fact, pretty fun. As you might suspect, Festinger’s prediction that those in the $1 group would like the task more proved to be correct.

Note that this experiment also demonstrates that ethics is a rather tricky subject. Cognitive dissonance is about worrying that your behaviour was illogical, not unethical: it doesn’t stop you behaving badly, just inexplicably.

Example:

To use Festinger’s example of a smoker who has knowledge that smoking is bad for his health, the smoker may reduce dissonance by choosing to quit smoking, by changing his thoughts about the effects of smoking (e.g., smoking is not as bad for your health as others claim), or by acquiring knowledge pointing to the positive effects of smoking (e.g., smoking prevents weight gain).

Unethical behaviour:

In the process of creating balance we make choices to make us feel better about ourselves, and sometimes our moral compass suffers.

On Unethical Amnesia

Unethical Amnesia was described by researchers from Northwestern University and Harvard University as a phenomenon they believed stemmed from the fact that memories of ourselves acting in ways we shouldn’t are uncomfortable.

Their hypothesis was that people limit their retrieval of memories that could threaten their moral self-concept. In other words, we don’t like to see ourselves as bad. They conducted 9 different studies with more than 2100 participants. Across these studies they saw that people remember the times they acted ethically, like playing a game fairly, more clearly than the times they cheated.

Francesca Gino and Maryam Kouchaki (psychologists) concluded that unethical behavior creates psychological distress and discomfort, and unethical amnesia lowers it. They hypothesized that we forget things we’ve done that are contrary to our self-image, and that the unethical behaviours therefore reoccur: we do not learn from the past.

Processes like obedience, conformity, moral disengagement, cognitive dissonance and unethical amnesia all reveal why, though we see ourselves as inherently good, in certain circumstances we are likely to behave badly.

This is important. Recognising that although people aren’t rational, they are to a large degree predictable, has profound implications for how tech and business leaders can approach making their organisations more ethical.

What Can Be Done?

The traditional approach to change is top-down: get the company’s leaders to make the change their priority, and role model the behaviour they want everyone else to follow.

A quicker, more effective way is to start with the individual, whether a leader or an employee, and their powerful desire to see themselves and be seen by others as a good person.

In his study, McLaverty (Doctor of Education) looked at the influence of culture on ethical decision making among leaders and executives of big companies. His narrative research techniques give us some interesting insights and solutions for these issues.

He found that the leaders recalled a total of 87 major ethical dilemmas. Only 16% of these incidents were caused by bribery, corruption or anti-competition issues.

More often the dilemmas were the result of competing interests, misaligned incentives or clashing cultures.

Based on these findings, McLaverty identified a number of obstacles and contradictions that need to be addressed if you want to make an impact on the ability of your teams to act ethically:

On Obstacles

- Business transformation programs and change management initiatives: Companies can warp their own ethical climate by pushing too much change from the top, too quickly and too frequently. People who are rushed or flustered are more likely to act unethically.

- Incentives and pressure to inflate achievement of targets: People do what they are rewarded to do, and most leaders are rewarded for hitting targets.

- Cross-cultural differences: The constant challenge of deciding whose cultural “rules” were paramount when making business decisions drowned out people’s internal ethical voices.

Once we know these obstacles and recognise that they stand in the way of making ethical decisions, we can start overcoming them. What has worked for the leaders in McLaverty’s study?

As an organisation/leader you can think about:

- How people are paid: make sure your compensation scheme rewards the right things.

- Who gets promoted and why: value people who reflect on ethical issues, who speak up and challenge accepted ways of doing things.

- How employees feel about the company: we want to work for businesses we can be proud of. If your engagement surveys show that people don’t trust managers, or that employees are disengaged and ashamed of the company, you might have a widespread ethical problem on your hands.

- Whether decisions are ethical enough: usually, ethics are just one factor in a decision. A leader who acknowledges this by, for example, talking about the dilemmas they’ve had to wrestle with, can re-shape the whole organisation.

It Matters To Me

But being ethical is not an option only for the CEO of a successful multinational corporation. As an individual you can make an impact even if the top of the organisation is not on board or the effects are taking time to trickle down.

The strongest way to make a company more ethical is to start with the individual. Companies become ethical one person at a time, one decision at a time. We all want to be seen as good people, a self-image known as our moral identity, and that comes with the responsibility to act like it, even as our memories of our less ethical doings fade over time.

We have to believe that doing the right thing is the only option. Luckily ethics comes with strong emotions like guilt, fear, regret and pride. Learning to recognise and not ignore these emotions helps build the self-belief to act and strengthen that inner compass.

On Speaking Up

Last but not least, if something needs to change, you need to speak up. If you see something that is going wrong, it is time to be brave and say something. Decide whether you will speak to your boss, your team lead or an advisory function (compliance or human resources). Talk to your personal network for support and guidance.

It will take courage, for some more than others, as speaking up does not always come without risks: a survey in the UK found that 6 in 10 bankers still feared negative consequences for flagging unethical behaviour. In tech, the Stack Overflow survey results showed that, for 46.6% of respondents, reporting an ethical problem would depend on the particulars of the situation.

In the fourth century BC, Aristotle said that fear was an innate part of courage. As psychologists now describe it: courage is persistence in the face of fear.

Do not underestimate the positive consequences of being courageous and speaking out: you will build increased self-respect, improved self-confidence and a more ethical work climate.

But perhaps more important, being courageous makes you happier.

About the Authors

Andrea Dobson is a registered psychologist and a cognitive behavioural therapist. As a practicing psychologist, Andrea specialised in depression and anxiety disorders, complex grief and forensic psychology. Andrea started working at Container Solutions in 2015 to expand their learning culture. This involved coaching, executive education and formalising the hiring process whilst expanding it to include psychometric testing. This work continued in 2017 when Andrea created CS’s leadership development programme. In 2018, Andrea started working for the Innovation Office with Pini Reznik, the founder of Container Solutions, to link patterns of consumer behaviour with their latest product development efforts.

References

- Asch, S.E. (1956). Studies of independence and conformity: I. A minority of one against a unanimous majority. Psychological Monographs: General and Applied, 70(9), 1-70.

- McLaverty, T.C. (2016). The influence of culture on senior leaders as they seek to resolve ethical dilemmas at work.

- Klass, E.T. (1978). Psychological effects of immoral actions: The experimental evidence. Psychological Bulletin, 85(4), 756.

- Festinger, L. (1957). A theory of cognitive dissonance. Stanford, CA: Stanford University Press.

- Kouchaki, M. & Gino, F. (2016). Memories of unethical actions become obfuscated over time. PNAS, 113(22), 6166-6171.

- Hofmann, W., Wisneski, D.C., Brandt, M.J. & Skitka, L.J. (2014). Morality in everyday life. Science, 345(6202), 1340-1343.

- Goodwin, G.P., Piazza, J. & Rozin, P. (2014). Moral character predominates in person perception and evaluation. Journal of Personality and Social Psychology, 106(1), 148-168.

- Festinger, L. & Carlsmith, J.M. (1959). Cognitive consequences of forced compliance. Journal of Abnormal and Social Psychology, 58, 203-210.

- Milgram, S. (1963). Behavioral study of obedience. Journal of Abnormal and Social Psychology, 67, 371-378.

- Weiten, W. (2010). Psychology: Themes and variations.