Article originally posted on InfoQ.

Key Takeaways

- The microservices architectural style simplifies implementing individual services. However, connecting, monitoring and securing hundreds or even thousands of microservices is not simple.

- A service mesh provides a transparent and language-independent way to flexibly and easily automate networking, security, and observation functions. In essence, it decouples development and operations for services.

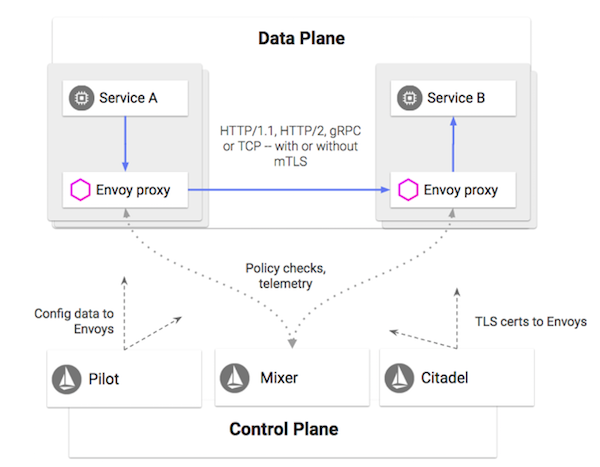

- The Istio service mesh is split into 1) a data plane built from Envoy proxies that intercepts traffic and controls communication between services, and 2) a control plane that supports services at runtime by providing policy enforcement, telemetry collection, and certificate rotation.

- The near-term goal is to bring Istio to 1.0, when the key features will all be in beta (including support for hybrid environments).

- The long-term vision is to make Istio ambient.

It wouldn’t be a stretch to say that Istio popularized the concept of a “service mesh”. Before we get into the details on Istio, let’s briefly dive into what a service mesh is and why it’s relevant. We all know the inherent challenges associated with monolithic applications, and the obvious solution is to decompose them into microservices. While this simplifies individual services, connecting, monitoring and securing hundreds or even thousands of microservices is not simple. Until recently, the solution was to string them together using custom scripts, libraries and dedicated engineers tasked with managing these distributed systems. This reduces velocity on many fronts and increases maintenance costs. This is where a service mesh comes in.

A service mesh provides a transparent and language-independent way to flexibly and easily automate networking, security, and telemetry functions. In essence, it decouples development and operations for services. So if you are a developer, you can deploy new services as well as make changes to existing ones without worrying about how that will impact the operational properties of your distributed systems. Similarly, an operator can seamlessly modify operational controls across services without redeploying them or modifying their source code. This layer of infrastructure between services and their underlying network is what is usually referred to as a service mesh.

Within Google, we use a distributed platform for building services, powered by proxies that can handle various internal and external protocols. These proxies are supported by a control plane that provides a layer of abstraction between developers and operators and lets us manage services across multiple languages and platforms. This architecture has been battle-tested to scale, keep latency low and provide rich features to every service running at Google.

Back in 2016, we decided to build an open source project for managing microservices that in a lot of ways mimicked what we use within Google, which we decided to call “Istio”. Why the name? Because Istio in Greek means “sail”, and Istio started as a solution that worked with Kubernetes, which in Greek means “helmsman” or “pilot”. It is important to note that Istio is agnostic to deployment environment, and was built to help manage services running across multiple environments.

Around the same time that we were starting the Istio project, IBM released an open source project called Amalgam8, a content-based routing solution for microservices that was based on NGINX. Realizing the overlap in our use cases and product vision, IBM agreed to become our partner in crime and shelve Amalgam8 in favor of building Istio with us, based on Lyft’s Envoy.

How does Istio work?

Broadly speaking, an Istio service mesh is split into 1) a data plane built from Envoy proxies that intercepts traffic and controls communication between services, and 2) a control plane that supports services at runtime by providing policy enforcement, telemetry collection, and certificate rotation.

Illustration: Dan Ciruli, Istio PM

Proxy

Envoy is a high-performance, open source distributed proxy developed in C++ at Lyft (where it handles all of their production traffic). Deployed as a sidecar, Envoy intercepts all incoming and outgoing traffic to apply network policies and integrate with Istio’s control plane. Istio leverages many of Envoy’s built-in features such as discovery and load balancing, traffic splitting, fault injection, circuit breakers and staged rollouts.
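To make one of these features concrete, here is a minimal sketch of the circuit-breaker idea in Python. It is an illustration only: the `CircuitBreaker` class, its parameters and its thresholds are hypothetical, and Envoy's own circuit breaking is configured and implemented differently.

```python
import time


class CircuitBreaker:
    """Toy circuit breaker: opens after consecutive failures, retries after a cool-down.

    Hypothetical illustration of the pattern, not Envoy's implementation.
    """

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def allow_request(self):
        if self.opened_at is None:
            return True
        # Half-open: allow a trial request once the cool-down has elapsed.
        return self.clock() - self.opened_at >= self.reset_after

    def record_success(self):
        # A successful call closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None

    def record_failure(self):
        self.failures += 1
        if self.failures >= self.max_failures:
            # Open (or re-open) the circuit and restart the cool-down.
            self.opened_at = self.clock()
```

A caller would check `allow_request()` before each upstream call and report the outcome with `record_success()` or `record_failure()`. The point of running this logic in a sidecar is that the service itself never has to contain it.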

Pilot

As an integral part of the control plane, Pilot manages proxy configuration and distributes service communication policies to all Envoy instances in an Istio mesh. It can take high-level rules (like roll-out policies), translate them into low-level Envoy configuration and push them to sidecars with no downtime or redeployment necessary. While Pilot is agnostic to the underlying platform, operators can use platform-specific adapters to push service discovery information to Pilot.
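The translation step can be pictured roughly as follows. This Python sketch expands one high-level rollout rule into a configuration entry per sidecar. The function `translate_rollout` and every field name in it are hypothetical; they do not reflect Pilot's API or Envoy's configuration schema.

```python
def translate_rollout(service, weights, sidecars):
    """Expand one high-level rollout rule into a per-sidecar config dict.

    `weights` maps a version label to its share of traffic in percent.
    All names and fields are hypothetical, not Pilot's or Envoy's schema.
    """
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    clusters = [
        {"cluster": f"{service}-{version}", "weight": weight}
        for version, weight in sorted(weights.items())
    ]
    # Every sidecar receives its own copy of the same routing table.
    return {
        sidecar: {"route": service, "weighted_clusters": list(clusters)}
        for sidecar in sidecars
    }
```

For example, `translate_rollout("reviews", {"v1": 90, "v2": 10}, ["sidecar-a", "sidecar-b"])` yields one entry per sidecar, each listing `reviews-v1` at weight 90 and `reviews-v2` at weight 10. The service and sidecar names are made up for the example.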

Mixer

Mixer integrates a rich ecosystem of infrastructure backend systems into Istio. It does this through a pluggable set of adapters using a standard configuration model that allows Istio to be easily integrated with existing services. Adapters extend Mixer’s functionality and expose specialized interfaces for monitoring, logging, tracing, quota management, and more. Adapters are loaded on-demand and used at runtime based on operator configuration.
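The adapter model is a plug-in pattern, which the following Python sketch illustrates. The `AdapterRegistry` class and its methods are hypothetical and are not Mixer's actual interfaces; the sketch only shows adapters being registered up front, then instantiated on first use and invoked according to an operator-supplied list.

```python
class AdapterRegistry:
    """Toy plug-in registry: adapters are created lazily and called per record.

    Hypothetical illustration of the pattern, not Mixer's adapter API.
    """

    def __init__(self):
        self._factories = {}  # adapter name -> zero-argument factory
        self._loaded = {}     # adapter name -> instantiated handler

    def register(self, name, factory):
        self._factories[name] = factory

    def dispatch(self, enabled, record):
        """Send `record` to each adapter named in the operator's `enabled` list."""
        results = {}
        for name in enabled:
            if name not in self._loaded:
                # On-demand load: build the adapter the first time it is used.
                self._loaded[name] = self._factories[name]()
            results[name] = self._loaded[name](record)
        return results

    def loaded(self):
        return sorted(self._loaded)
```

An adapter that is registered but never enabled is never constructed, which keeps unused backends out of the request path.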

Citadel

Citadel (previously known as Istio Auth) performs certificate signing and rotation for service-to-service communication across the mesh, providing mutual authentication as well as mutual authorization. Citadel certificates are used by Envoy to transparently inject mutual TLS on each call, thereby securing and encrypting traffic using automated identity and credential management. As is the theme throughout Istio, authentication and authorization can be configured with minimal or no service code changes and will seamlessly work across multiple clusters and platforms.

Why use Istio?

Istio is highly modular and is used for a variety of use cases. While it is beyond the scope of this article to cover every single benefit, let me provide a glimpse of how it can simplify the day-to-day life of netops, secops and devops.

Resilience

Istio can shield applications from flaky networks and cascading failures. If you are a network operator, you can systematically test the resiliency of your application using features like fault injection to inject delays and isolate failures. If you want to migrate traffic from one version of a service to another, you can reduce the risk by doing the rollout gradually through weight-based traffic routing. Or even better, you can mirror live traffic to a new deployment to observe how it behaves before doing the actual migration. You can use Istio Gateway to load-balance the incoming and outgoing traffic and apply route rules like timeouts, retries and circuit breakers to reduce and recover from potential failures.
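Weight-based routing is easy to picture with a small sketch. The Python function below assigns each request to a version according to percentage weights by hashing a request identifier into one of 100 buckets. The function name, the use of a request ID and the hashing scheme are all assumptions made for illustration; this is not how Istio or Envoy selects a destination.

```python
import hashlib


def pick_version(request_id, weights):
    """Pick a service version for a request according to percentage weights.

    `weights` maps a version label to its share of traffic in percent.
    Hypothetical sketch; hashing the request ID is an illustrative choice.
    """
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    # Map the request ID to a stable bucket in the range 0..99.
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100
    upper = 0
    for version, weight in sorted(weights.items()):
        upper += weight
        if bucket < upper:
            return version
    raise AssertionError("unreachable: bucket is always below 100")
```

With weights such as `{"v1": 90, "v2": 10}`, roughly one request in ten lands on `v2`, and shifting the weights in steps moves traffic gradually without touching either version of the service.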

Security

One of the main Istio use cases is securing inter-service communications across heterogeneous deployments. In essence, security operators can now uniformly and at scale enable traffic encryption, lock down access to a service without breaking other services, ensure mutual identity verification, whitelist services using ACLs, authorize service-to-service communication and analyze the security posture of applications. They can implement these policies across the scope of a service, namespace or mesh. All of these features can reduce the need for layers of firewalls and simplify the job of a security operator.

Observability

The ability to visualize what’s happening within your infrastructure is one of the main challenges of a microservice environment. Until recently, individual services had to be extensively instrumented for end-to-end monitoring of service delivery. And unless you have a dedicated team willing to tweak every binary, getting a holistic view of the entire fleet and troubleshooting bottlenecks can be very cumbersome.

With Istio, out of the box, you get visualization of key metrics and the ability to trace the flow of requests across services. This allows you to do things like enabling autoscaling based on application metrics. While Istio supports a whole host of providers like Prometheus, Stackdriver, Zipkin and Jaeger, it is backend agnostic. So if you don’t find what you are looking for, you can always write an adapter and integrate it with Istio.

Where is Istio now?

New features are being continuously added while we are stabilizing and improving the existing ones. In true agile style, Istio features individually go through their own lifecycle (dev/alpha/beta/stable). Although we are still tinkering around with some functionality, there are a bunch of features that are ready for production use (beta/stable). Check out the latest feature list on istio.io.

Istio has a rigorous release cadence. While daily and weekly releases are available, they are not supported and may not be reliable. The monthly snapshots, on the other hand, are relatively safe and are usually packed with new features. However, if you are looking to use Istio in production, look for releases that have the tag “LTS” (Long Term Support). As of this writing, 0.8 is the latest LTS release. You can find that release and all the other versions on GitHub.

What is next?

It’s been a year since Istio 0.1 was officially launched at GlueCon. While we have come far, there is still a lot more ground to cover. The near-term goal is to bring Istio to 1.0, when the key features will all be in beta (and in some cases stable). It’s important to note that this is not 100% of Istio features, just what we consider the most important features based on feedback from the community. We are also rigorously working on improving nonfunctional requirements like performance and scalability for this launch, as well as improving our documentation and getting-started experience.

One of the primary goals for Istio is support for hybrid environments. As an example, someday users could run VMs in GCE, an on-prem Cloud Foundry cluster, and other services in another public cloud, and Istio would provide them with a holistic view of their entire service fleet, as well as the means to operate and secure connections between them. There is work in progress to enable a multi-cluster architecture in which you can join multiple Kubernetes clusters into a single mesh and enable service discovery across clusters on a flat network; this work is in alpha in the 0.8 LTS release. In the not-so-distant future, it will also support global ingress for cluster-level load balancing, as well as support for non-flat networks using Gateway peering.

Another area of focus beyond our 1.0 release is API management capabilities. As an example, we intend to launch a service broker API that will help connect service consumers with service operators by enabling discovery and provisioning of individual services. We will also provide a common interface for API management features like API business analytics, API key validation, auth validation (JWT, OAuth, etc.), transcoding (JSON/REST to gRPC), routing and integration with various API management systems like Google Endpoints and Apigee.

All these near-term goals pave the path towards our long-term vision, which is to make Istio ambient. As Sven Mawson, our technical lead and Istio founder, puts it, “We want to reach a future state where Istio is woven into every environment, with service management available no matter what environment or platform you use.”

While it’s still early days, the speed of development and adoption has been accelerating. From major cloud providers to independent contributors, Istio has already become synonymous with service mesh, and an integral part of infrastructure roadmaps. With every launch we are getting closer to the new reality.

About the Author

Jasmine Jaksic works at Google as the lead technical program manager on Istio. She has 15 years of experience building and supporting various software products and services. She is a cofounder of Posture Monitor, an application for posture correction using a 3D camera. She is also a contributing writer for The New York Times, Wired and Huffington Post. Follow her on Twitter: @JasmineJaksic.