Article originally posted on InfoQ.

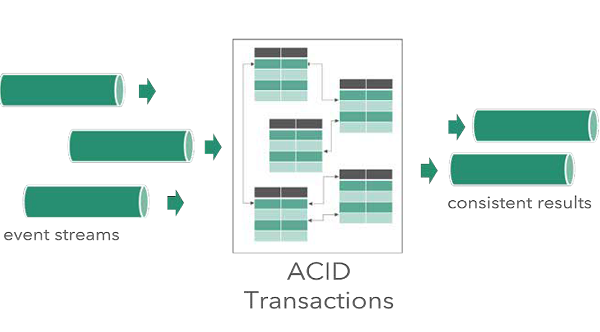

Data Artisans has announced the general availability of Streaming Ledger, which extends Apache Flink with the ability to perform serializable ACID transactions across tables, keys, and event streams. The patent-pending technology is a proprietary add-on for Flink. It goes beyond the previous standard, in which operations could only work consistently on a single key at a time.

Before this announcement, stream processing frameworks such as Flink and Spark offered exactly-once processing only for operations on a single key at a time. With the release of data Artisans Streaming Ledger, Flink applications can now run operations that span multiple keys, tables, and event streams while still guaranteeing ACID transactions. ACID is an acronym for the four properties that transactional systems guarantee:

- Atomicity: The transaction applies all changes atomically. The transaction function executes either all or none of the modifications on the rows.

- Consistency: The transaction function brings the tables from one consistent state into another consistent state.

- Isolation: Each transaction executes as if it were the only transaction operating on the tables.

- Durability: The changes made by a transaction are durable and never get lost.

Transactions implemented according to the ACID principles execute as a single operation, which either completes or fails in its entirety. This ensures data consistency even in the event of outages, application errors, and similar failures. A commonly used example of a transaction that requires ACID guarantees is a transfer of funds from one bank account to another. Although Streaming Ledger is the first implementation of ACID transactions in stream processing, such transactions have long been available in relational database systems like SQL Server and Oracle.
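The following single-process Python sketch illustrates atomicity and consistency in the bank-transfer example. It is not Streaming Ledger's API. The `transfer` function and `InsufficientFunds` exception are hypothetical names. The sketch assumes a plain dictionary stands in for the table, and it does not model concurrency or durability. Changes are staged on copies of the two affected rows and written back together, so a failed transfer leaves the table untouched.

```python
from typing import Dict


class InsufficientFunds(Exception):
    """Raised when a transfer would overdraw the source account (hypothetical)."""


def transfer(accounts: Dict[str, int], src: str, dst: str, amount: int) -> None:
    """Move `amount` from `src` to `dst`, applying both updates or neither.

    Hypothetical helper: a dict stands in for the table; no locking or
    durability is modeled.
    """
    if amount <= 0:
        raise ValueError("amount must be positive")
    # Stage changes on copies of the affected rows; a missing key raises
    # KeyError here, before anything is written.
    staged = {src: accounts[src], dst: accounts[dst]}
    staged[src] -= amount
    if staged[src] < 0:
        # Abort: the real table has not been modified yet.
        raise InsufficientFunds(f"{src} has only {accounts[src]}")
    staged[dst] += amount
    # Commit: both rows are written back in one step.
    accounts.update(staged)


accounts = {"alice": 100, "bob": 20}
transfer(accounts, "alice", "bob", 30)       # succeeds
try:
    transfer(accounts, "bob", "alice", 500)  # fails, changes nothing
except InsufficientFunds:
    pass
print(accounts)  # {'alice': 70, 'bob': 50}
```

The total balance stays at 120 after both calls, which is the consistency invariant the transfer example relies on.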

Source: https://data-artisans.com/wp-content/uploads/2018/08/2018-08-31-dA-Streaming-Ledger-whitepaper.pdf

Data Artisans was founded by the original creators of Apache Flink, an open source stream processing framework. The company provides stream processing infrastructure through the data Artisans Platform, also known as the dA Platform. The platform consists of Apache Flink, the dA Application Manager, and now Streaming Ledger. Srinath Perera, vice president of Research at WSO2, describes stream processing as a big data technology that allows querying streams of data and making decisions based on them:

> Stream Processing is a Big data technology. It enables users to query continuous data stream and detect conditions fast within a small time period from the time of receiving the data. The detection time period may vary from few milliseconds to minutes. For example, with stream processing, you can receive an alert by querying a data streams coming from a temperature sensor and detecting when the temperature has reached the freezing point.
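Perera's temperature example can be sketched as a Python generator that inspects each reading as it arrives. This is not Flink code. The reading format (timestamp, Celsius) pairs, the `FREEZING_POINT_C` threshold, and the `freezing_alerts` name are assumptions made for illustration. Alerting only on the transition into freezing, rather than on every cold reading, is also a design choice of this sketch.

```python
from typing import Iterable, Iterator, Tuple

FREEZING_POINT_C = 0.0  # assumed threshold, in degrees Celsius


def freezing_alerts(readings: Iterable[Tuple[int, float]]) -> Iterator[str]:
    """Yield an alert when the temperature drops to or below freezing.

    `readings` may be unbounded; each (timestamp, celsius) pair is handled
    as soon as it arrives. An alert fires only on the transition into
    freezing, not for every reading while it stays cold.
    """
    below = False
    for ts, celsius in readings:
        now_below = celsius <= FREEZING_POINT_C
        if now_below and not below:
            yield f"t={ts}: temperature reached {celsius} C"
        below = now_below


sensor = [(1, 3.5), (2, 0.8), (3, -0.2), (4, -1.0), (5, 1.2), (6, 0.0)]
alerts = list(freezing_alerts(sensor))
print(alerts)  # ['t=3: temperature reached -0.2 C', 't=6: temperature reached 0.0 C']
```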

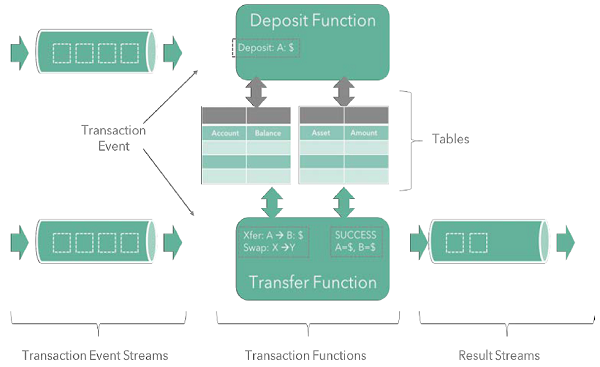

Along with the announcement, data Artisans released a white paper that describes the details and architecture of Streaming Ledger. According to the white paper, the architecture consists of four fundamental building blocks. Tables maintain the state of an application, transaction functions update the tables, transaction event streams drive the transactions, and optional result streams emit events reporting the success or failure of the transactions. Furthermore, tables modified within a transaction are isolated from concurrent changes. As a result, this setup ensures data consistency even when working across streams.
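The four building blocks can be mapped onto a toy, single-threaded Python model. It is a conceptual sketch only and does not reproduce Streaming Ledger's API. `TransferEvent`, `TransferResult`, `transfer_fn`, and `run_ledger` are hypothetical names. Events are applied one at a time in arrival order, so the schedule is serializable by construction. The sketch does not model the parallel, distributed execution a Flink job would use.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Iterator


@dataclass(frozen=True)
class TransferEvent:
    """Element of the transaction event stream (hypothetical schema)."""
    txn_id: int
    src: str
    dst: str
    amount: int


@dataclass(frozen=True)
class TransferResult:
    """Element of the optional result stream (hypothetical schema)."""
    txn_id: int
    committed: bool


def transfer_fn(accounts: Dict[str, int], ev: TransferEvent) -> bool:
    """Transaction function: reads and writes two rows of the `accounts` table.

    All checks run before any write, so a rejected event changes nothing.
    """
    if ev.src not in accounts or ev.dst not in accounts:
        return False
    if accounts[ev.src] < ev.amount:
        return False
    accounts[ev.src] -= ev.amount
    accounts[ev.dst] += ev.amount
    return True


def run_ledger(accounts: Dict[str, int],
               events: Iterable[TransferEvent]) -> Iterator[TransferResult]:
    """Drive transactions from the event stream and emit one result per event.

    Sequential processing only; no parallelism or fault tolerance is modeled.
    """
    for ev in events:
        yield TransferResult(ev.txn_id, transfer_fn(accounts, ev))


accounts = {"a": 100, "b": 0, "c": 50}  # the table
events = [
    TransferEvent(1, "a", "b", 60),
    TransferEvent(2, "a", "c", 60),  # rejected: "a" has only 40 left
    TransferEvent(3, "c", "a", 50),
]
results = list(run_ledger(accounts, events))
print(accounts)  # {'a': 90, 'b': 60, 'c': 0}
```

The result stream reports `True`, `False`, and `True` for the three events, and the total balance stays at 150.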

Source: https://data-artisans.com/wp-content/uploads/2018/08/2018-08-31-dA-Streaming-Ledger-whitepaper.pdf

Data Artisans also provides a GitHub repository with steps to build Streaming Ledger from source or obtain it from Maven Central. The repository also contains several samples to help users get started, such as the in-depth SimpleTrade example, which illustrates how to use Streaming Ledger.