Data Science is Changing and Data Scientists will Need to Change Too – Here’s Why and How

Article originally posted on Data Science Central.

Summary: Deep changes are underway in how data science is practiced and deployed to solve business problems and create strategic advantage. These same shifts will change how data scientists do their work. Here’s why and how.

There’s a sea change underway in data science. It’s changing how companies embrace data science and it’s changing the way data scientists do their job. The increasing adoption and strategic importance of advanced analytics of all types is the backdrop. There are two parts to this change.

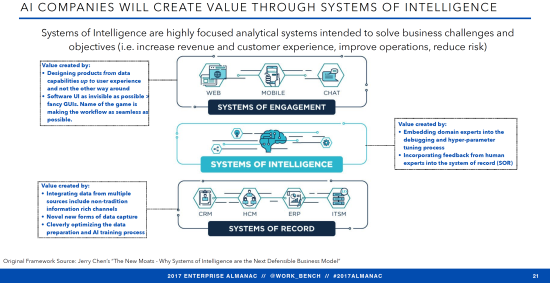

One is what is happening right now as analytic platforms build out to become one-stop shops for data scientists. The second, and more important, is just beginning but will take over rapidly: advanced analytics will become the hidden layer of Systems of Intelligence (SOI) in the new enterprise applications stack.

Both these movements are changing the way data scientists need to do their jobs and how we create value.

What’s Happening Now

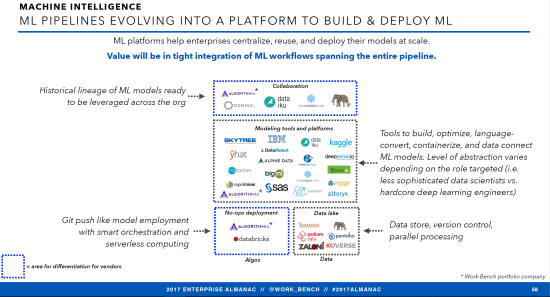

Advanced analytic platforms are undergoing several evolutionary steps at once. This is the final buildout in the current competitive strategy being used by advanced analytic platforms to capture as many data science users as possible. These last steps include:

- Full integration from data blending, through prep, modeling, deployment, and maintenance.

- Cloud-based, so they can expand and contract their MPP resources as required.

- Expanding capabilities to include deep learning for text, speech, and image analysis.

- Adopting higher and higher levels of automation in both modeling and prep, reducing data science labor and increasing speed to solution. Gartner says that within two years 40% of our tasks will be automated.

Here are a few examples I’m sure you’ll recognize.

- Alteryx with roots in data blending is continuously upgrading its on-board analytic tools and expanding access to third party GIS and consumer data such as Experian.

- SAS and SPSS have increased blending capability, incorporated MPP, and most recently added enhanced one-click model building and data prep options.

- New entrants like DataRobot emphasize labor savings and speed-to-solution through MPP and maximum one-click automation.

- The major cloud providers are introducing complete analytic platforms of their own to capture the maximum number of data science users. These include Google’s Cloud Datalab, Microsoft Azure, and Amazon SageMaker.

The Whole Strategic Focus of Advanced Analytic Platforms is About to Change

We are in the final stages of large analytics users wanting to assemble different packages in a best-of-breed strategy. Gartner says users, starting with the largest, will increasingly consolidate around a single platform.

These same consolidation forces were at work in ERP systems in the 90s and in DW/BI and CRM systems in the 00s. A single-vendor solution gives the customer greater efficiency and ease of use, and gives the vendor a wide moat: a good user experience combined with painfully high switching costs.

This is only the end of the last phase and not where advanced analytic platforms are headed over the next two to five years. So far the emphasis has been on internal completeness and self-sufficiency. According to both strategists and venture capitalists, the next movement will see the advanced analytic platform disappear into an integrated enterprise stack as the critical middle layer, the System of Intelligence.

Why the Change in Strategy – and When?

The phrase Systems of Intelligence (SOI) was first used by Microsoft CEO Satya Nadella in early 2015. However, it wasn’t until 2017 that the strategy of creating wide moats using SOI was articulated by venture capitalist Jerry Chen at Greylock Partners.

Suddenly Systems of Intelligence is on everyone’s tongue as the next great generational shift in enterprise infrastructure, the great pivot in the ML platform revolution.

Where current advanced analytic platform strategies rely on being the one-stop, general-purpose data science platform of choice, those investing in and developing the next generation of platforms say that is about to change. Their argument is that the needs of each industry, or of each major business process like finance, HR, ITSM, supply chain, and ecommerce, have become so specialized in their data science content that wide moats are best constructed by making the data science disappear as the middle layer between systems of record and systems of engagement.

As Chen states, “Companies that focus too much on technology without putting it in context of a customer problem will be caught between a rock and a hard place”. As an investor he would say that he is unwilling to back a general purpose DS platform for that very reason.

Chen and many others are investing on the premise that the future of data science, machine learning, and AI is as the invisible secret-sauce middle layer. No one cares exactly how the magic is done, so long as your package arrives on time, or the campaign is successful, or whatever insight the DS has provided proves valuable. It’s all about the end user.

From the developer’s and investor’s point of view, this strategy is also the only forward path to deliver measurable and lasting competitive differentiation. The treasured wide moat.

So in the marketplace the emphasis is on the system of engagement. Look at Slack, Amazon Alexa, and every other speech/text/conversational UI startup that uses ML as the basis for its interaction with the end user. In China, Tencent and Alibaba have almost completely dominated ecommerce, gaming, chat, and mobile payments by focusing on improving their system of engagement through advanced ML.

It’s also true that systems of engagement experience more rapid evolution and turnover than either the underlying ML or the systems of record. So it’s important that in this new enterprise stack the ML be able to work with a variety of existing and new systems of engagement and also systems of record.

The old methods of engagement don’t disappear, but new ones are added. In fact, being in control of the end user and being compatible with multiple systems of record provides access to the flow of data that allows the ML SOI to constantly improve, enhancing your dominant position.

Here’s how Chen and other SOI enthusiasts see the market today.

How Does this Change the Way Data Scientists Work?

So why does this matter to data scientists and how will it change the way we perform our tasks? Gartner says that by 2020 more than 40% of data science tasks will be automated. There are two direct results:

Algorithm Selection and Tuning Will No Longer Matter

It will be automated. It will no longer be one of the data scientist’s primary tasks. We see the movement to automating model construction all around us from the automated modeling features in SPSS to the fully automated modeling platforms like DataRobot.

Our ability to try various algorithms including our hands-on ability to tune hyperparameters will very rapidly be replaced by smart automation. The amount of time we need to spend on this part of the project is dramatically reduced and will no longer be the best and most effective use of our expertise.
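
To make the idea concrete, here is a minimal sketch of what automated algorithm selection and tuning amounts to: fit several candidate models, score each on held-out data, and keep the winner. Everything in it is an assumption for illustration (the toy data, the three model families, and the names `auto_select` and `make_knn`); it is not how SPSS or DataRobot work internally.

```python
from statistics import mean

def fit_mean(xs, ys):
    """Baseline: always predict the training mean."""
    avg = mean(ys)
    return lambda x: avg

def fit_linear(xs, ys):
    """Ordinary least squares with a single predictor."""
    mx, my = mean(xs), mean(ys)
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    return lambda x: my + slope * (x - mx)

def make_knn(k):
    """k-nearest-neighbour regression; k is the hyperparameter being tuned."""
    def fit(xs, ys):
        pairs = list(zip(xs, ys))
        def predict(x):
            nearest = sorted(pairs, key=lambda p: abs(p[0] - x))[:k]
            return sum(y for _, y in nearest) / k
        return predict
    return fit

def mse(predict, xs, ys):
    """Mean squared error of a fitted model on a data set."""
    return mean((predict(x) - y) ** 2 for x, y in zip(xs, ys))

def auto_select(candidates, train, valid):
    """Fit every candidate on train, score on valid, return the best name and all scores."""
    scores = {name: mse(fit(*train), *valid) for name, fit in candidates.items()}
    return min(scores, key=scores.get), scores

# Toy, deliberately non-linear data (hypothetical): y = x squared.
xs = [float(i) for i in range(20)]
ys = [x * x for x in xs]
# Every fourth point is held out for validation.
train = ([x for i, x in enumerate(xs) if i % 4], [y for i, y in enumerate(ys) if i % 4])
valid = ([x for i, x in enumerate(xs) if i % 4 == 0], [y for i, y in enumerate(ys) if i % 4 == 0])

candidates = {
    "mean": fit_mean,
    "linear": fit_linear,
    "knn_k1": make_knn(1),
    "knn_k2": make_knn(2),
    "knn_k3": make_knn(3),
}
best_name, scores = auto_select(candidates, train, valid)
print(best_name, scores[best_name])
```

The loop is trivial to scale to more algorithms and larger hyperparameter grids, which is exactly why this part of the job is such an easy target for automation.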

Data Prep will be Mostly Automated

Data prep for the most part will be automated and in some narrowly defined instances can be completely automated. This problem is actually much more difficult to totally automate than model creation. However, you can already use automated data prep in tools as diverse as SPSS and Xpanse Analytics. Right now, of the many steps in prep at least the following can be reliably automated:

- Blend data sources.

- Profile the data for initial discovery.

- Recode missing and mislabeled values.

- Normalize the data distribution.

- Run univariate analyses.

- Bin categoricals.

- Create N-grams from text fields.

- Detect and resolve outliers.
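
Several of these steps are simple enough to sketch in a few lines of standard-library Python. The sketch below is illustrative only: the missing-value tokens, the median imputation, the median-absolute-deviation outlier rule, the z-score as the normalization step, and all the function names are assumptions, not the behavior of any particular prep tool.

```python
from collections import Counter
from statistics import mean, median, pstdev

# Hypothetical spellings of "missing"; a real tool would profile these per column.
MISSING_TOKENS = {"", "na", "n/a", "null", "none", "?"}

def recode_missing(raw):
    """Turn missing-value spellings into None and parse everything else as float."""
    return [None if str(v).strip().lower() in MISSING_TOKENS else float(v) for v in raw]

def impute_median(values):
    """Fill None with the median of the observed values."""
    fill = median(v for v in values if v is not None)
    return [fill if v is None else v for v in values]

def flag_outliers(values, cutoff=5.0):
    """Flag values more than `cutoff` median absolute deviations from the median."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return [False] * len(values)
    return [abs(v - med) / mad > cutoff for v in values]

def zscore(values):
    """Rescale to mean 0 and standard deviation 1."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma for v in values]

def bin_rare(categories, min_count=2):
    """Collapse categories seen fewer than `min_count` times into 'other'."""
    counts = Counter(categories)
    return [c if counts[c] >= min_count else "other" for c in categories]

def word_ngrams(text, n=2):
    """Lower-case the text and return its word n-grams."""
    words = text.lower().split()
    return [" ".join(words[i:i + n]) for i in range(len(words) - n + 1)]

# Made-up example column and fields.
raw_amounts = ["12", "NA", "15", "14", "", "13", "400"]
amounts = impute_median(recode_missing(raw_amounts))
outlier_flags = flag_outliers(amounts)
clean = [v for v, bad in zip(amounts, outlier_flags) if not bad]
scaled = zscore(clean)
regions = bin_rare(["east", "east", "west", "north", "east", "west"])
bigrams = word_ngrams("Late delivery refund request")
print(amounts, outlier_flags, regions, bigrams, sep="\n")
```

Each function encodes a judgment call (which tokens mean "missing", how extreme is "extreme"), and those defaults are exactly where an automated tool can quietly go wrong.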

If you’ve experienced any of these automated prep tools you know that today they’re not perfect. Give them a little time. This step alone eliminates all the unpleasant grunt work and lower level time and labor in ML.

Who You Want to Work For

The Systems of Intelligence strategy shift raises another interesting change. It probably impacts who you want to work for. One of the great imbalances in the shortage of the best data scientists is that such a high percentage work for tech companies mostly engaged in one-size-fits-all platforms. Certainly one implication is that we may want to search out industry or process vertical solution developers who will be the primary beneficiaries of this major change.

What’s Left for the Data Scientist to do?

Whether you’ve been in the industry for years or are fresh out of school, you’ve been intently focused on data prep, model selection, and tuning. For many of us these are the tasks that define our core skill sets. So what’s left?

This isn’t as dark as it seems. We shift to the higher value tasks that were always there but represented a much smaller percentage of our work.

Feature Engineering and Model Validation Become a Focus

In all the automation of prep so far there have been some attempts to automate feature engineering (feature creation) by, for example, taking the differences among all the possible date fields, creating all the possible ratios among variables, looking at trends in values, and other techniques. These have been brute force and tend to create lots of meaningless engineered features.
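
A small sketch shows why the brute-force approach produces so much noise. The record, the field names, and the function `brute_force_features` below are all hypothetical; the point is only the combinatorics of differencing every date pair and dividing every numeric pair.

```python
from datetime import date
from itertools import combinations, permutations

def brute_force_features(row, date_fields, numeric_fields):
    """Generate every date difference and every ratio, with no domain judgment."""
    feats = {}
    # One difference (in days) for each unordered pair of date fields.
    for a, b in combinations(date_fields, 2):
        feats[f"{b}_minus_{a}_days"] = (row[b] - row[a]).days
    # One ratio for each ordered pair of numeric fields, skipping zero divisors.
    for a, b in permutations(numeric_fields, 2):
        if row[b] != 0:
            feats[f"{a}_per_{b}"] = row[a] / row[b]
    return feats

# Made-up order record.
order = {
    "opened": date(2018, 1, 10),
    "shipped": date(2018, 1, 15),
    "closed": date(2018, 2, 1),
    "price": 200.0,
    "quantity": 4,
    "weight_kg": 2.0,
}
features = brute_force_features(
    order,
    date_fields=["opened", "shipped", "closed"],
    numeric_fields=["price", "quantity", "weight_kg"],
)
for name, value in features.items():
    print(name, value)
```

Three date fields and three numeric fields already yield nine engineered features. A few, like `price_per_quantity`, are plausibly useful; others, like `weight_kg_per_price`, probably are not, and nothing in the code can tell the difference.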

It is your knowledge of data science, and particularly your industry-specific domain knowledge, that will keep the creation and selection of important new predictive engineered features a major part of our future efforts.

Your expertise will also be required at the earliest stages of data examination to ensure the automation hasn’t gone off the rails. It’s pretty easy to fool today’s automated prep tools into believing data may be linear when in fact it may be curvilinear or even non-correlated (I’m thinking Anscombe’s Quartet here). It still takes an expert to validate that the automation is heading in the right direction.
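
The Anscombe example is easy to reproduce. The sketch below uses the first two of Anscombe’s four published data sets; the `curvature_signal` check is my own illustrative diagnostic (an assumption, not a standard named test) that correlates the straight-line residuals with squared distance from the mean of x.

```python
from math import sqrt

# Anscombe's quartet, sets I and II (they share the same x values).
X = [10, 8, 13, 9, 11, 14, 6, 4, 12, 7, 5]
Y1 = [8.04, 6.95, 7.58, 8.81, 8.33, 9.96, 7.24, 4.26, 10.84, 4.82, 5.68]
Y2 = [9.14, 8.14, 8.74, 8.77, 9.26, 8.10, 6.13, 3.10, 9.13, 7.26, 4.74]

def pearson(xs, ys):
    """Pearson correlation coefficient."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return cov / sqrt(sxx * syy)

def linear_residuals(xs, ys):
    """Residuals from the least-squares straight line."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    return [y - (my + slope * (x - mx)) for x, y in zip(xs, ys)]

def curvature_signal(xs, ys):
    """Correlate linear-fit residuals with squared distance from mean x.

    Values near +1 or -1 suggest the straight line is missing a curve.
    """
    mx = sum(xs) / len(xs)
    return pearson([(x - mx) ** 2 for x in xs], linear_residuals(xs, ys))

r1, r2 = pearson(X, Y1), pearson(X, Y2)
c1, c2 = curvature_signal(X, Y1), curvature_signal(X, Y2)
print(f"set I : r = {r1:.3f}, curvature signal = {c1:.2f}")
print(f"set II: r = {r2:.3f}, curvature signal = {c2:.2f}")
```

Both sets report essentially the same correlation, about 0.816, so a tool that looks only at that summary treats them identically. The residual check comes out close to -1 for set II, exposing the curve, and much weaker for set I. Knowing to look is the expert’s contribution.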

Your Understanding of the Business Problem to be Solved

If you are working inside a large corporation as part of the advanced analytics team then your ability to correctly understand the business problem and translate that into a data science problem will be key.

If you are working under the SOI strategy and trying to solve a cross-industry process problem (HR, finance, supply chain, ITSM), or even if you are working within a more narrowly defined industry vertical (e.g. ecommerce customer engagement), it will be your knowledge and understanding of the end user’s experience that will be valued.

Even today progress as a data scientist requires deep domain knowledge of your specialty process or industry. Knowledge of the data science required to implement the solution is not sufficient without domain knowledge.

Machine Learning Will Increasingly be a Team Sport

With all this talk of automation it is easy to be misled into thinking that professional data scientists will no longer be necessary. Nothing could be further from the truth. True, fewer of us will be required to solve problems that can be implemented much more quickly.

Where does this leave the Citizen Data Scientist? This is a movement that has quite a lot of momentum and it’s easy to understand that reasonably smart and motivated LOB managers and analysts may not only want to consume more data science but also want a hands-on seat at the table.

And indeed they should have a major role in defining the problem and implementing the solution. However, even with all the new automated features the underlying data science still requires an expert’s eye.

The new focus of your skills will be as a team leader, one with deep knowledge of the data science and the business domain.

How Fast Will All This Happen?

The build out of advanced analytic platforms and automated features has been underway for about the last two years. I’m with Gartner on this one. I think roughly half our tasks will be automated within two years. Beyond that it’s about how fast this trickles down from the largest companies to the smaller ones. The speed and reduced cost that automation offers will be impossible to resist.

As for the absorption of the data science platform into the hidden middle layer of the stack as the System of Intelligence, you can already see this underway in many of the thousands of VC-funded startups. This is fairly new and it will take time for these startups to scale and mature. However, don’t overlook the role that M&A will play in bringing these new platform concepts inside large existing players. That is probable and will only accelerate the trend.

Is hiding the data science from the end user in any way a bad thing? Not at all. Our contribution to the end user’s experience was never meant to be on direct display. This means more opportunities to apply our data science skills on more tightly focused groups of end users and create more delight in their experience.

About the author: Bill Vorhies is Editorial Director for Data Science Central and has practiced as a data scientist since 2001. He can be reached at: