Google Releases Knative: A Kubernetes Framework to Build, Deploy, and Manage Serverless Workloads

Article originally posted on InfoQ. Visit InfoQ

At Google Cloud Next 2018 the release of Knative was announced as a “Kubernetes-based platform to build, deploy, and manage modern serverless workloads”. The open source framework attempts to codify the best practices around three areas of developing cloud native applications: building containers (and functions), serving (and dynamically scaling) workloads, and eventing. Knative has been developed by Google in close partnership with Pivotal, IBM, Red Hat, and SAP.

Knative (pronounced kay-nay-tiv) provides a set of middleware components that are “essential to build modern, source-centric, and container-based applications” that can run on premises, in the cloud, or in a third-party data center. The framework is the open source set of components drawn from the same technology that powers the new GKE serverless add-on.

According to the Google Cloud Platform blog post, “Bringing the best of serverless to you”, Knative focuses on the common tasks of building and running applications on cloud native platforms, such as orchestrating source-to-container builds, binding services to event ecosystems, routing and managing traffic during deployment, and auto-scaling workloads. The framework provides engineers with “familiar, idiomatic language support and standardized patterns you need to deploy any workload, whether it’s a traditional application, function, or container.”

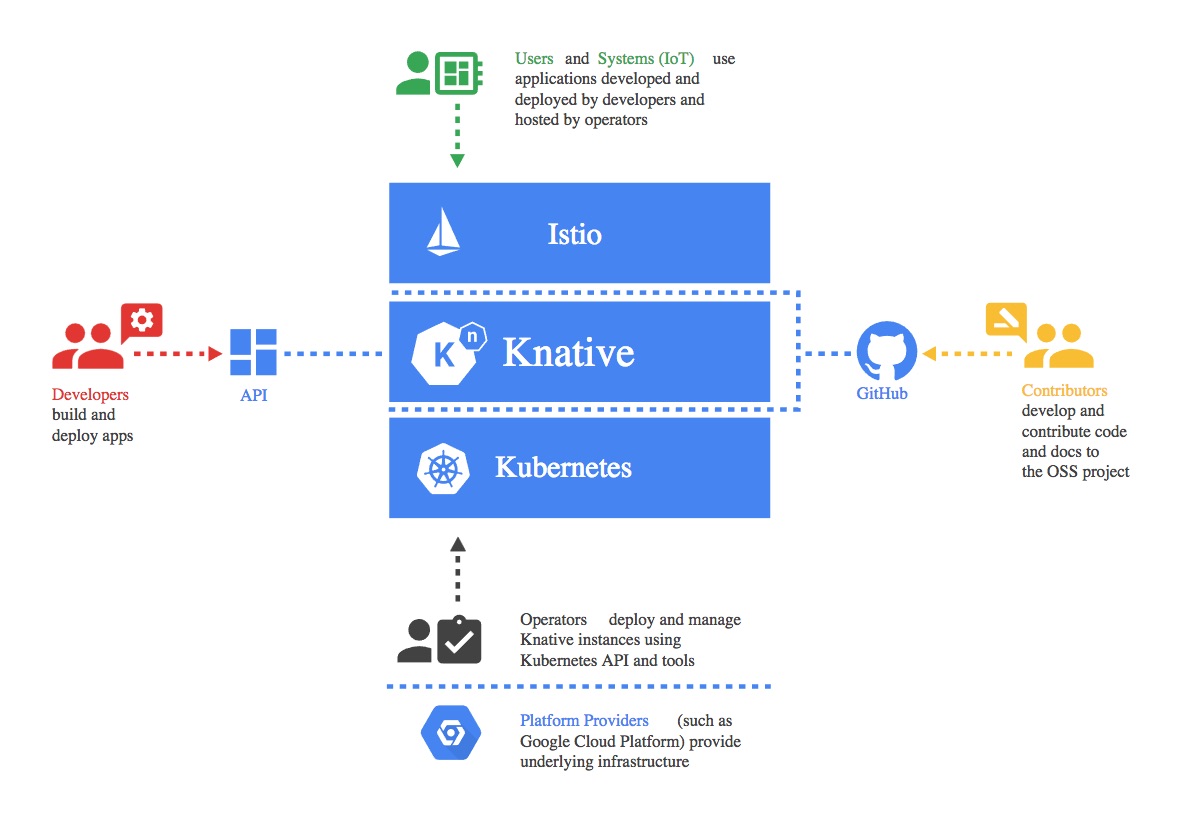

Knative is built upon Kubernetes and Istio, an open platform to connect and secure microservices (effectively a service mesh control plane to the Envoy proxy), and has been designed to take account of multiple personas interacting with the framework, including developers, operators and platform providers.

The personas involved with Knative (image taken from the Knative GitHub repo)

Knative aims to provide reusable implementations of “common patterns and codified best practices”, and the following components are currently available:

- Build – Source-to-container build orchestration

- Eventing – Management and delivery of events

- Serving – Request-driven compute that can scale to zero

The Knative build component extends Kubernetes and utilises existing primitives to provide an engineer with the ability to run on-cluster container builds from source (based on the previously released Kaniko). The goal is to use Kubernetes-native resources to obtain source code from a repository, build this into a container image, and then run that image. However, the documentation states that currently the end user of the framework is still responsible for developing the corresponding components that perform the majority of these functions.

While a Knative build does not yet provide a complete standalone CI/CD solution, it does provide a lower-level building block that was purposefully designed for integration into, and use by, larger systems.

The eventing system has been designed to address a series of common needs for cloud native software development: services are loosely coupled during development and deployed independently; a producer can generate events before a consumer is listening, and a consumer can express an interest in an event or class of events that is not yet being produced; and services can be connected to create new applications without modifying producer or consumer, and with the ability to select a specific subset of events from a particular producer.

The documentation states that these design goals are consistent with the design goals of CloudEvents, a common specification for cross-service interoperability being developed by the CNCF Serverless WG. Documentation within the Eventing repo makes it clear that this is “very much a work-in-progress”, and there is a list of known issues with the current state.
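The loose coupling described for eventing can be pictured with a small in-memory model. The following is a minimal Python sketch, not Knative's eventing API: the `Broker` class, its methods, and the event field names (`type`, `source`, `id`) are all hypothetical and chosen only for illustration. It shows a producer emitting events before any consumer exists, and a consumer that later subscribes to a subset of events from one particular producer, without either side being modified.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Tuple

Event = Dict[str, str]
Predicate = Callable[[Event], bool]
Handler = Callable[[Event], None]


class Broker:
    """Toy in-memory broker (hypothetical, not a Knative component)."""

    def __init__(self) -> None:
        # Events published before any consumer has subscribed to their type.
        self._pending: Dict[str, List[Event]] = defaultdict(list)
        # (filter, handler) pairs registered per event type.
        self._subscribers: Dict[str, List[Tuple[Predicate, Handler]]] = defaultdict(list)

    def publish(self, event: Event) -> None:
        subscribers = self._subscribers.get(event["type"], [])
        if not subscribers:
            # No consumer is listening yet: buffer the event for later delivery.
            self._pending[event["type"]].append(event)
            return
        for predicate, handler in subscribers:
            if predicate(event):
                handler(event)

    def subscribe(self, event_type: str, handler: Handler,
                  predicate: Predicate = lambda event: True) -> None:
        self._subscribers[event_type].append((predicate, handler))
        # Replay buffered events to the new consumer. Simplification: buffered
        # events that fail this consumer's filter are discarded.
        for event in self._pending.pop(event_type, []):
            if predicate(event):
                handler(event)


broker = Broker()
received: List[Event] = []

# The producer emits events before any consumer is listening.
broker.publish({"type": "image.uploaded", "source": "bucket-a", "id": "1"})
broker.publish({"type": "image.uploaded", "source": "bucket-b", "id": "2"})

# A consumer later expresses interest in events from a single producer.
broker.subscribe("image.uploaded", received.append,
                 lambda event: event["source"] == "bucket-a")

# Events published after subscription are delivered immediately.
broker.publish({"type": "image.uploaded", "source": "bucket-a", "id": "3"})
```

In this sketch the consumer ends up with events `1` and `3`: the first was buffered and replayed on subscription, the second was filtered out by source, and the third was delivered directly.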

The Knative Serving documentation states that this part of the framework builds on Kubernetes and Istio to support deploying and serving of serverless applications and functions. The goal is to provide middleware primitives that enable: rapid deployment of serverless containers; automatic scaling up and down to zero; routing and network programming for Istio components; and point-in-time snapshots of deployed code and configurations.
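One way to picture automatic scaling down to zero and back up is a proxy that sits in front of the workload, holds an incoming request while no replicas are running, starts one, and then forwards the request. The following is a minimal, single-threaded Python sketch under that assumption. It is not Knative's implementation, and the `Backend` and `HoldingProxy` names and methods are hypothetical.

```python
class Backend:
    """Stand-in for a deployed workload with a replica count (hypothetical)."""

    def __init__(self) -> None:
        self.replicas = 0  # starts scaled to zero

    def scale_to(self, replicas: int) -> None:
        self.replicas = replicas

    def handle(self, request: str) -> str:
        if self.replicas == 0:
            raise RuntimeError("no replicas running")
        return f"handled {request}"


class HoldingProxy:
    """Holds a request while the backend is at zero, scales up, then forwards."""

    def __init__(self, backend: Backend) -> None:
        self.backend = backend
        self.cold_starts = 0

    def route(self, request: str) -> str:
        if self.backend.replicas == 0:
            # The request is held here (trivially, because this model is
            # synchronous) until at least one replica is available.
            self.cold_starts += 1
            self.backend.scale_to(1)
        return self.backend.handle(request)

    def scale_down_when_idle(self) -> None:
        # A real system would decide this from traffic metrics; this model
        # simply scales to zero when asked.
        self.backend.scale_to(0)


backend = Backend()
proxy = HoldingProxy(backend)

first = proxy.route("GET /")    # cold start: the backend is scaled from 0 to 1
second = proxy.route("GET /")   # warm: forwarded straight to the backend
proxy.scale_down_when_idle()    # no traffic: back to zero replicas
third = proxy.route("GET /")    # cold start again
```

The caller never sees the zero-replica state; the cost of scaling up is paid as added latency on the first request after an idle period.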

Joe Beda, Founder and CTO at Heptio, noted on Twitter the potential benefits of the serving component:

One of the more interesting parts of KNative is “scale to zero”. This is done by routing requests to an “actuator” that holds requests, scales up backend and then forwards. I’ve been waiting for someone to build this.

The Twitter thread continues with his observation that the serving component is manipulating Istio rules directly, and that having multiple “owners” of these rules is “interesting and it moves Istio to an implementation detail, to some degree.” Others, including Gabe Monroy, Lead PM of Containers at Azure, were skeptical of the integration of Istio into the framework:

My biggest concern about Knative is the dependency on Istio. Was that really necessary?

Oren Teich, Director of Product Management at Google, provided more context around the release of Knative in a series of Tweets. He began by stating that the Google team see serverless as driving two key shifts in software development: the operational model, and the programming model. The serverless operational model is about pay for what you use, scaling, security patches, and no maintenance. The serverless programming model is about source-driven deploys, microservices, reusable primitives, and event-driven/reactive models. Teich then expanded on the role Knative aims to play:

Knative is infrastructure to allow the programming models to run on any operational model. Sure, now you’re managing K8S (or GKE or whatever), but you can program in the same model.

As you can see from the code base (http://github.com/knative) it’s just getting started. The three primitives today allow source -> container, container execution, and event->execution bindings.

He also noted that although there currently “isn’t a great” developer experience, Knative is “the infrastructure to build incredible serverless products, and ensure portability in the programming model between them”, and that Google is investing in addressing this.

The Pivotal team are also large contributors to Knative, and their blog post, “Knative: Powerful Building Blocks For a Portable Function Platform”, describes how their existing serverless framework Project Riff is being re-architected to leverage Knative:

Our team committed a number of full-time employees to improve the Serving project within Knative, which runs the dynamic workloads. We took the thoughtful eventing model from project riff and helped embed that in Knative too. And we contributed to the Build project, including the addition of Cloud Foundry Buildpack support. The first non-Google pull request came from us, and our ongoing investment conveys our belief that this initiative matters.

Additional information about Knative can be found in the project’s GitHub repository, and details of how to get involved with the community are also available.