NVIDIA Announces RAPIDS, Medical Image Application, and a Driving Simulator for Autonomous Vehicles.

MMS • RSS

Article originally posted on InfoQ. Visit InfoQ

Jensen Huang, CEO of NVIDIA, gave a keynote at the GPU Technology Conference 2018 in Munich. He announced RAPIDS, an open-source CUDA accelerated toolkit that can help data scientists to faster process their data. They also announced a partnership with King’s collece in London to work on medical imaging applications. They announced a self-driving car simulator that car manufacturers can use for verification of autonomous vehicles.

RAPIDS

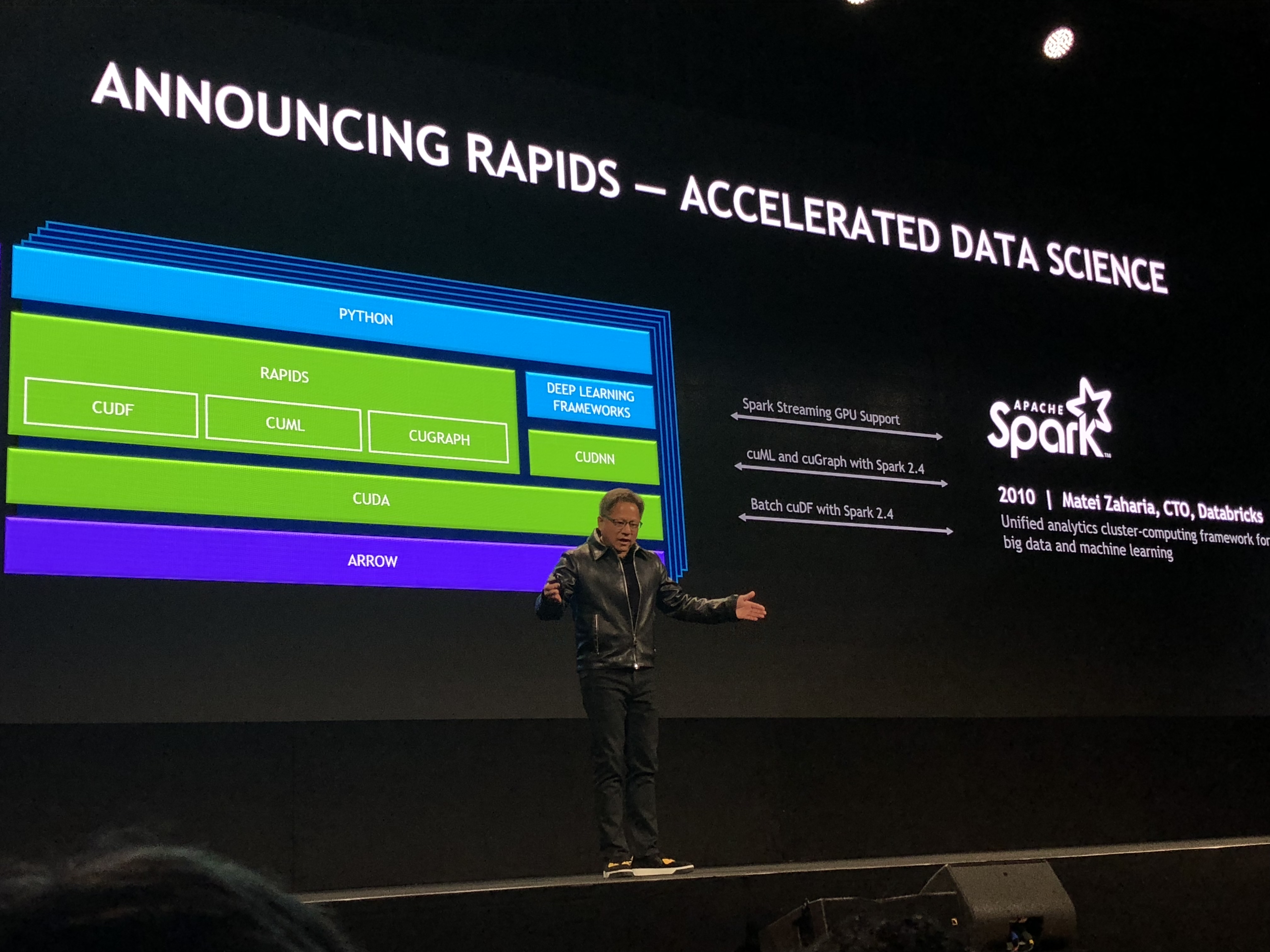

Nvidia announced Rapids: an open-source Software the incorporates GPU technology acceleration in the data science pipeline. It builds on the classical machine learning pipeline many data scientists are using: Python programs made with Numpy, pandas, and scikit-learn. Rapids can read data with multiple Cuda cores, and run both learning and inference on multiple Cuda cores. RAPIDS will be added to Spark in version 2.4, with streaming GPU support, cuML and cuGraph and batch cuDF. To make sure their data science platform is usable for companies NVIDIA collaborated with several large companies, such as Oracle, IBM, and Wallmart. You can find more information on NVIDIA’s blog.

NVIDIA for medical applications

NVIDIA is also entering the medical market, where they used their Clara AGX platform for the radiology market. They created an Image recognition algorithm powered by neural networks for visualization of radiology images. The computer here needs to run a medical imaging pipeline: which requires taking in sensor data, processing it, and visualizing it. You can find more information on NVIDIAs blog.

NVIDIAs platform for for autonomous vehicles

Jensen Huang announced the NVIDIA AGX, which is a computer meant for autonomous machines. It is a board with a Xavier processing, and has a high speed IO of 109GB per seconds. As the computer of an autonomous machine (either car or robot) is different from other applications. Platforms in the real world need to run in real-time, and have to be reliable.

With Munich being home to multiple large vehicle manufacturers, GTC Europe is used to announce progress in NVIDIAs effort to become producer of hardware for autonomous vehicles. Last year they already announced the Pegasus platform, which will be released in 2019. In addition the compute hardware they also talked about the drive works SDK, which contains many algorithms that can be used for autonomous driving. The Drive IX is available now, and the Drive AGX Xavier development kit is also available now. Volvo announced that they will use the Drive AGX platform to pilot their consumer vehicles that will have level 2 self-driving functions. Level 2 means that the car will have advanced features such as adaptive cruise control and lane-keeping, but that the driver will be in the loop to monitor the cars performance.

NVIDIA also announced a simulator for autonomous vehicles: drive constellation. It combines two computers, where one computer renders the environment which the other computer uses to make decisions about what action to take. This simulator can be used to verify autonomous vehicle functions, and could even be used to create a virtual self-driving car license computers need to pass to be allowed to drive on the public road. More information can be found on the NVIDIA blog.

Tesla T4 and DGX2

Jensen Huang also showed the Tesla T4, which was already announced on a previous GTC on September 18. It is a multi precision TensorCore accelerator, which can run inference in float32, int8, and int4. It can do 5.5 trillion operations per second. Jensen also talked about the DGX2, which they announced in March 2018. This platform consists of 16 Tesla V100 32GB GPUS connected on one board. They are connected with 12 NVSwitches. It has 1.5TB of system memory, and can process up to 2 Petaflops per second. There is also 32TB of SSDs for data storage. KubeFlow As most companies are working with multipe servers in a cloud setting NVIDIA announced KubeFlow. By running a TensorRT inference server you can deploy your neural network inference to a data center. It can distribute the load of a neural network inference over multiple servers. At the moment companies often have multiple pods, with each pod running on dedicated model for inference. Unfortunately, if the demand for 1 specific model goes up you have to scale servers to meet the demand for this functionality. TensorRT with Kubernetes makes it possible to distribute the workload with multiple models on multiple servers. Kubernetes figures out where the workload is in the data centers and puts the models on servers with leftover capacity.