Article originally posted on InfoQ.

Two and a half months after the release of version 1.5, Pivotal has released version 1.6 of Spring Cloud Data Flow (SCDF), a project for building real-time data processing pipelines and orchestrating them on runtimes such as Pivotal Cloud Foundry (PCF), Kubernetes, and Apache Mesos. New features include:

- A new task scheduler for PCF

- Dashboard improvements

- Kubernetes support enhancements

- A new app hosting tool and local repository

We examine a few of the new features here.

Getting Started

As with the previous version of SCDF, the quick start guide demonstrates how to start this new version using the docker-compose utility:

DATAFLOW_VERSION=1.6.1.RELEASE docker-compose up

Once the server is running, the SCDF dashboard can be accessed locally at http://localhost:9393/dashboard/.
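Because the containers may take some time to start, a small polling helper can confirm when the dashboard URL above is reachable. The following is a minimal sketch and not part of SCDF: the function name wait_for_url, its default attempt count, and its delay are assumptions made for illustration, and it relies only on curl being installed.

```shell
# Poll a URL until it responds successfully or the attempts run out.
# Usage: wait_for_url URL [ATTEMPTS] [DELAY_SECONDS]
# (hypothetical helper; name and defaults are illustrative assumptions)
wait_for_url() {
  local url="$1"
  local attempts="${2:-30}"
  local delay="${3:-2}"
  local i=1
  while [ "$i" -le "$attempts" ]; do
    # -s hides progress output, -f turns HTTP errors into a non-zero exit,
    # -o /dev/null discards the response body
    if curl -sf -o /dev/null "$url"; then
      echo "ready after $i attempt(s): $url"
      return 0
    fi
    sleep "$delay"
    i=$((i + 1))
  done
  echo "gave up after $attempts attempt(s): $url" >&2
  return 1
}
```

After running docker-compose up in another terminal, calling wait_for_url http://localhost:9393/dashboard/ would block until the dashboard answers or the attempts are exhausted.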

Task Scheduling

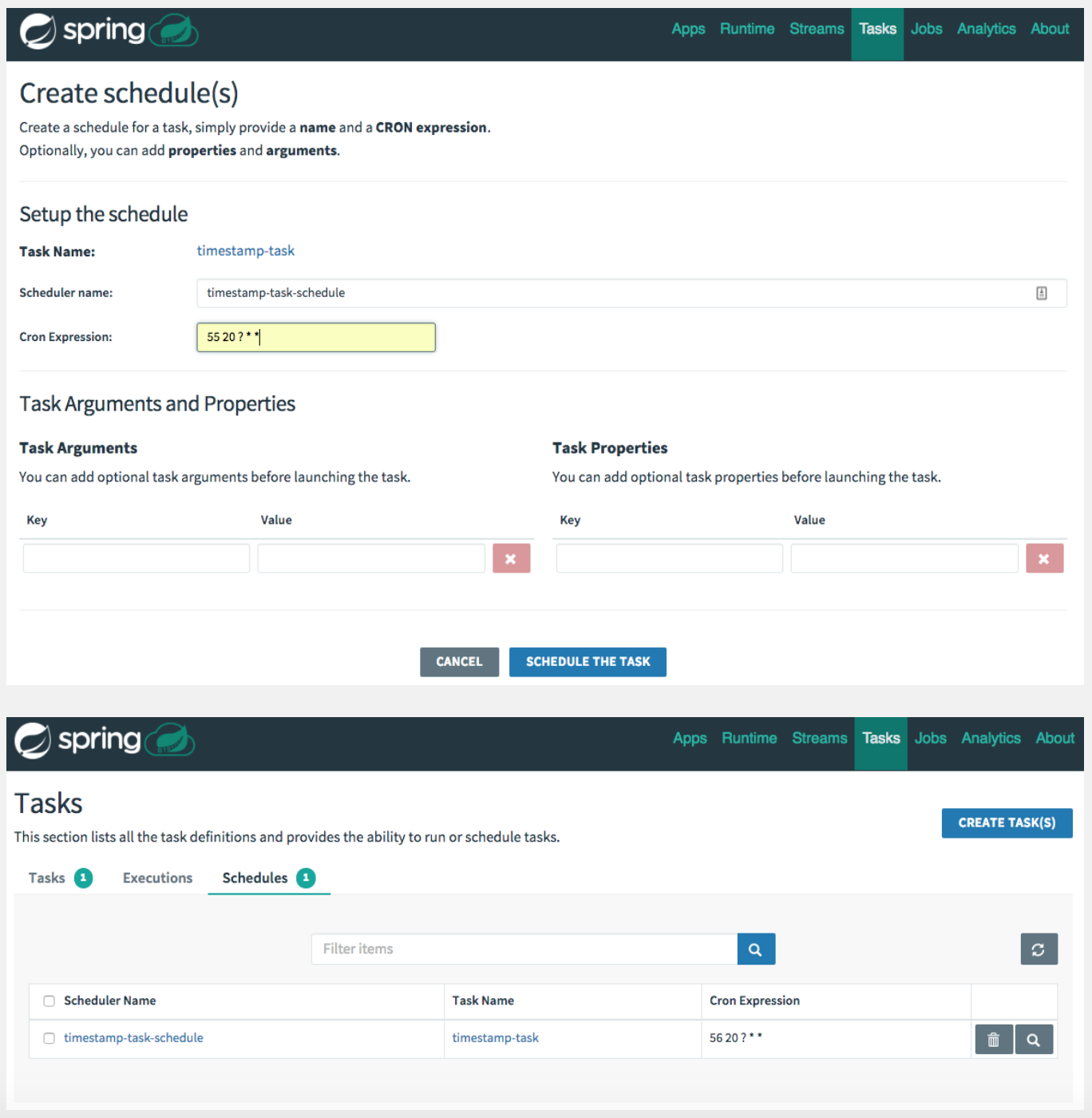

With the introduction of native PCF Scheduler integration in SCDF for Cloud Foundry, task definitions can be scheduled and unscheduled through SCDF using a cron expression. The PCF Scheduler is a service that handles tasks such as database migrations and HTTP calls. Developers can create, run, and schedule jobs, and view their history. The scheduler can be accessed via the Tasks menu in the SCDF dashboard.
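To make the cron expression concrete, the sketch below checks the shape of an expression before it is entered in the dashboard. It assumes the five-field layout (minute, hour, day of month, month, day of week), which should be confirmed against the PCF Scheduler documentation for the installed version. The function names are hypothetical and only the field count is checked, not the values.

```shell
# Count the whitespace-separated fields in a cron expression.
cron_field_count() {
  # Run in a subshell so that "set -f" (which stops "*" from being expanded
  # to file names) and the positional parameters do not leak to the caller.
  ( set -f; set -- $1; echo "$#" )
}

# Succeed only when the expression has exactly five fields
# (assumed format: MIN HOUR DAY-OF-MONTH MONTH DAY-OF-WEEK).
is_five_field_cron() {
  [ "$(cron_field_count "$1")" -eq 5 ]
}
```

For instance, is_five_field_cron '0 2 * * *' succeeds for a daily 02:00 schedule, while a six-field expression that includes a seconds column is rejected.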

SCDF App Hosting Tool

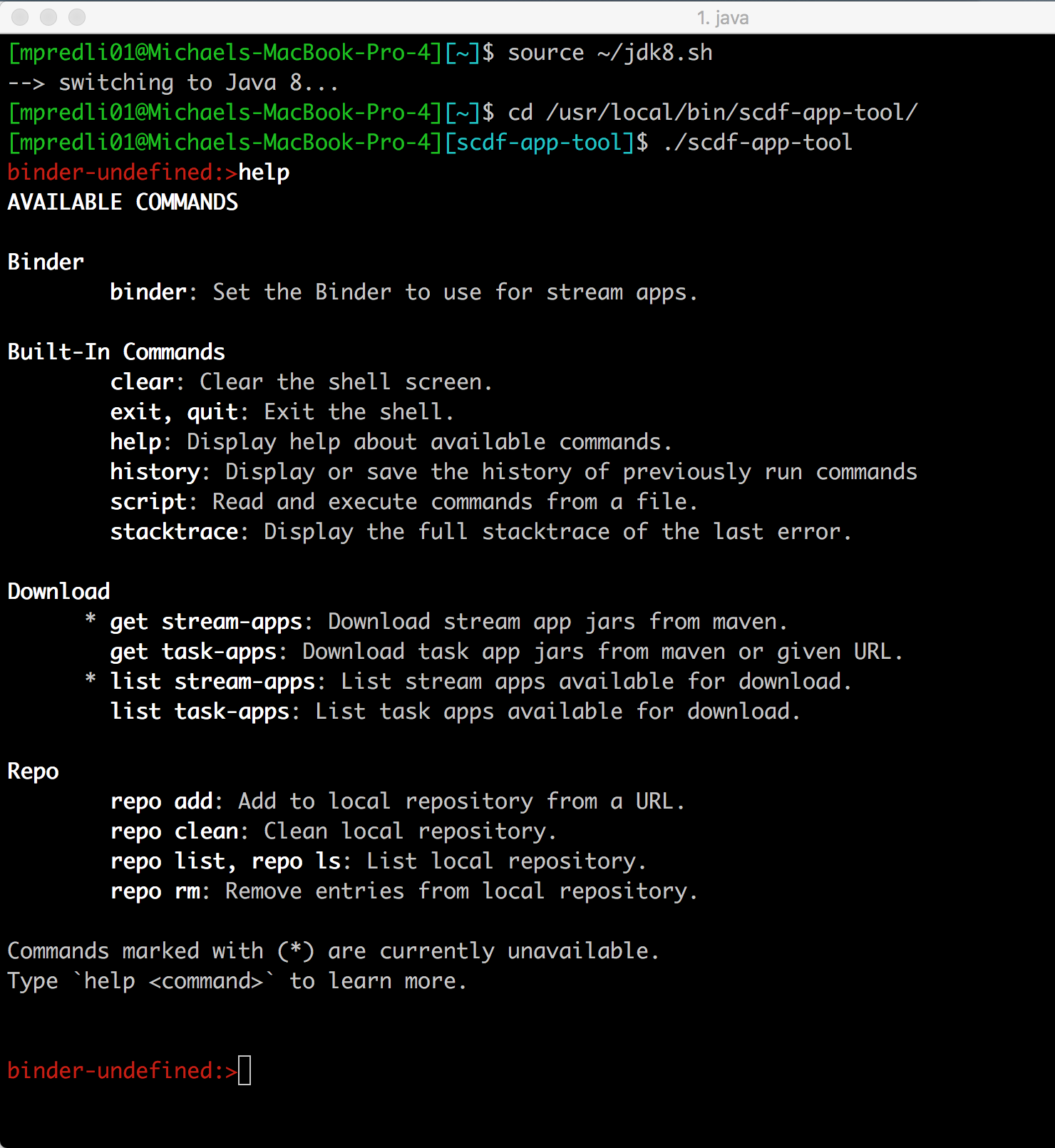

Also new in version 1.6 is the SCDF App Hosting Tool, built with Spring Shell. It is designed to build and maintain a standalone SCDF App Repository on a local network, for example for developers running SCDF behind a firewall with no access to the Spring Maven Repository. The App Hosting Tool, scdf-app-tool, can be built from source or obtained by downloading the binaries.

Upon startup, the App Hosting Tool launches a REPL with an initial prompt, binder-undefined:>, to indicate that a stream app needs to be defined. The commands available in the tool, as listed by the help command, are shown below.

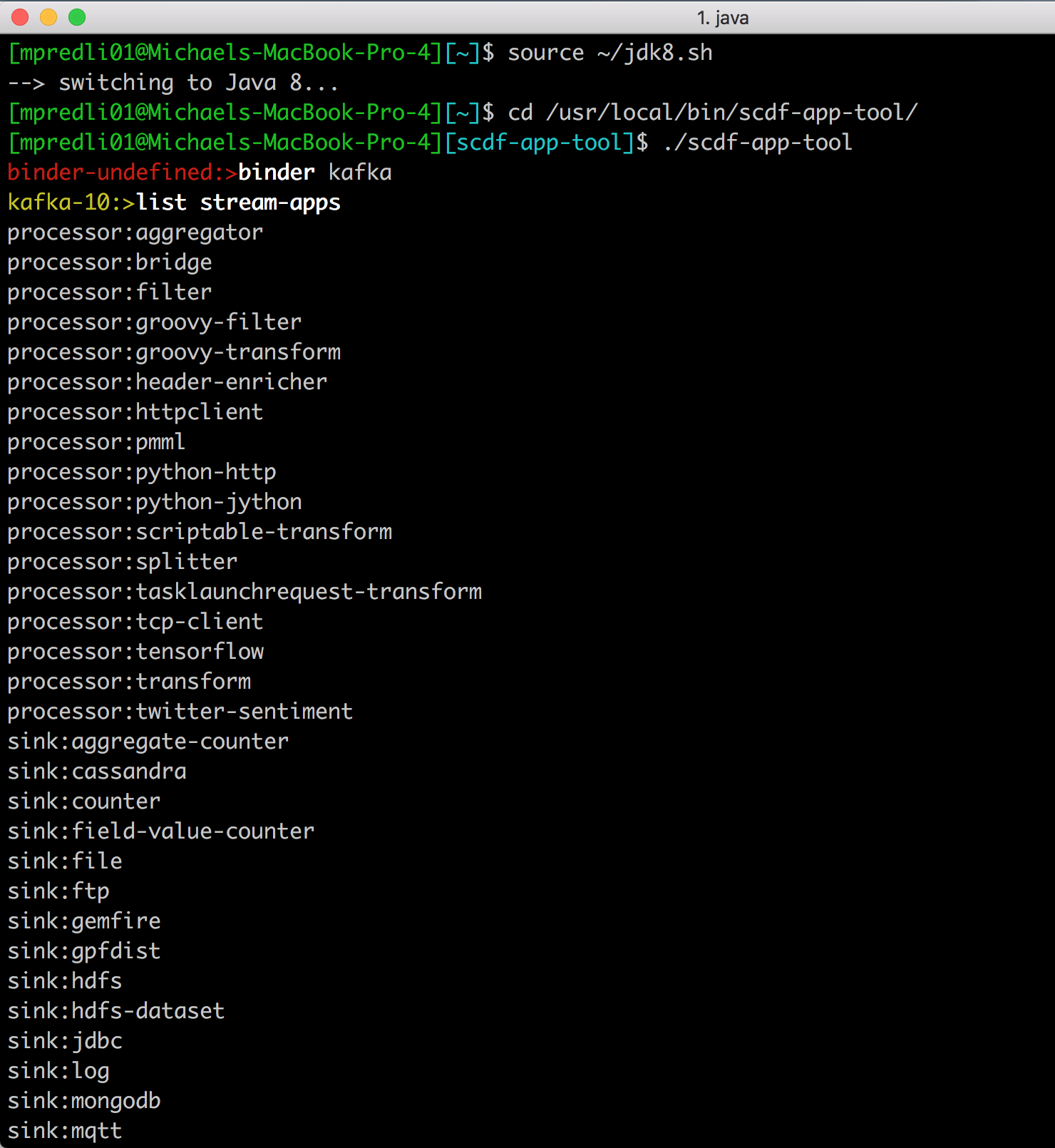

Note that the commands get stream-apps and list stream-apps are not available at this point. They become available once a stream app has been defined using the binder command. Supported binders are kafka, kafka-10, and rabbit, but it’s important to note that the kafka parameter for the binder command is an alias for kafka-10.

Once a binder has been selected with kafka or kafka-10, the prompt changes to kafka-10:>, at which point the get stream-apps and list stream-apps commands may be called.
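The alias rule can be summarized in a few lines of shell. This is only an illustration of the mapping described above, not code from scdf-app-tool; the function name resolve_binder is hypothetical.

```shell
# Map a binder argument to the binder name it resolves to.
# Illustrative only: mirrors the rule that "kafka" is an alias for "kafka-10".
resolve_binder() {
  case "$1" in
    kafka | kafka-10) echo "kafka-10" ;;
    rabbit)           echo "rabbit" ;;
    *)
      echo "unsupported binder: $1" >&2
      return 1
      ;;
  esac
}
```

Both resolve_binder kafka and resolve_binder kafka-10 print kafka-10, which matches the prompt the tool displays in either case.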

SCDF App Repository

The SCDF App Repository, a Spring Boot application, is a web-based repository that hosts stream and task applications so that they can be registered and deployed without an external Maven repository. Located under the config directory of the scdf-app-tool, the App Repository, scdf-app-repo, can be built as follows:

$ cd config/scdf-app-repo

$ ./mvnw clean package

The App Repository can be deployed to a cloud platform or run locally:

$ java -jar target/scdf-app-repo-0.0.1-SNAPSHOT.jar

Once running, the hosted artifacts on the repository can be accessed locally via http://localhost:8080/repo/.
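The build and run steps can be combined into a small guard that refuses to start when the jar has not been built yet. This is a sketch under assumptions: the function name run_app_repo is hypothetical, and the default path is simply the jar name used in the command above, relative to config/scdf-app-repo.

```shell
# Start the App Repository if its jar exists; otherwise explain how to build it.
# Usage: run_app_repo [PATH_TO_JAR]
# (hypothetical helper; the default path is taken from the run command above)
run_app_repo() {
  local jar="${1:-target/scdf-app-repo-0.0.1-SNAPSHOT.jar}"
  if [ ! -f "$jar" ]; then
    echo "missing $jar; run ./mvnw clean package in config/scdf-app-repo first" >&2
    return 1
  fi
  java -jar "$jar"
}
```

Called from config/scdf-app-repo with no arguments, it launches the same jar as the command shown earlier, after which the repository should be reachable at the local URL given above.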

Mark Pollack, senior staff engineer at Pivotal, spoke to InfoQ about this latest release.

InfoQ: What makes Spring Cloud Data Flow unique over other real-time data processing pipelines?

Pollack: Spring Cloud Data Flow is a lightweight Spring Boot application that provides a data integration toolkit to orchestrate the composition of Spring Cloud Stream (SCSt) and Spring Cloud Task (SCT) microservice applications into coherent real-time streaming and batch data pipelines respectively. Real-time streaming applications can use a wide range of middleware products, such as Kafka KStreams, RabbitMQ, and Google Pub-Sub. The common denominator to all this is Spring Boot. Everything from the programming model to testing, CI/CD, and operating these applications on cloud platforms such as Cloud Foundry or Kubernetes is consistent.

While there are numerous streaming platforms and real-time analytics solutions in the market, architecturally, we are unique in our cloud-native approach to data processing workloads, where CI/CD for streaming applications is a first-class citizen. To that end, the data processing logic (i.e., Spring Boot Apps) can react to real-time traffic patterns by auto-scaling, or undergo rolling upgrades without disrupting the upstream or downstream data processing.

InfoQ: What’s on the horizon for Spring Cloud Data Flow?

Pollack: Spring Cloud Data Flow spans across several projects in the ecosystem with each of the projects having independent backlogs and release cadences.

Our primary focus is to improve developer productivity when it comes to building real-time streaming and batch data pipelines. While adding new features and improvements to the streaming and batch programming models is a top priority, we also keep close tabs on operational improvements in the respective cloud platforms such as Cloud Foundry or Kubernetes.

A few notable features:

- Continued investments in the Kafka and Kafka Streams ecosystem to facilitate State Stores and Interactive Queries, while still keeping the cloud-native practices intact.

- Batch/Task workloads are typically scheduled on a recurring cadence or as a cron job. Scheduling implementations for Cloud Foundry (via PCF Scheduler) and Kubernetes (via the CronJob spec) are under development.

- Usability is a big focus, with numerous improvements to the Dashboard ranging from orchestration mechanics and interactivity to the overall look & feel.

- Security continues to be a top priority for many of our customers, and for our team, too. Additional security capabilities for Streams launching Tasks, as well as support for the Composed Tasks feature, are targeted for upcoming releases. There is also an audit trail feature that is going to be released in the next version to answer the question “Who did what and when?”

- We are also actively spiking the design of the next generation of data processing workloads. Our foundation revolves around Spring Boot uber-jars today, and it could be plain-old simple Function jars tomorrow. Spring Cloud Stream, Spring Cloud Function, and Spring Cloud Stream App Starters are all being reevaluated from the overall goals, design, and architecture perspectives.

- Spring Cloud Data Flow for PCF, in particular, automates the provisioning aspects in Cloud Foundry along with a profound security model for end-to-end SSO. There’s continued interest in optimizing the tile to further enhance the developer and operator interactions with SCDF.

InfoQ: What are your current responsibilities, that is, what do you do on a day-to-day basis?

Pollack: As the team lead, I do a bit of everything. I help define the roadmap, do some feature development, maintenance, acceptance testing, release management, and customer support. I am also trying to beat my high score in Robotron.