Article originally posted on Data Science Central.

Summary: Advanced analytic platform developers, cloud providers, and the popular press are promoting the idea that everything we do in data science is AI. That may be good for messaging but it’s misleading to the folks who are asking us for AI solutions and makes our life all the more difficult.

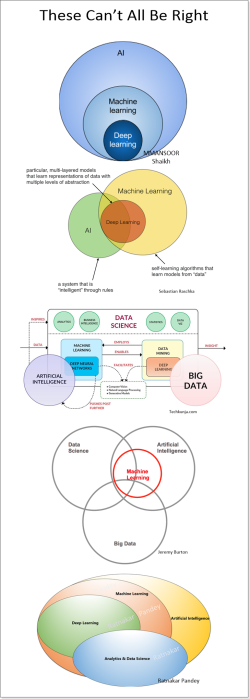

Arrgh! Houston, we have (another) problem. It’s the definition of Artificial Intelligence (AI) and specifically what’s included and what isn’t. The problem becomes especially severe if you know something about the field (i.e. you are a data scientist) and you are talking to anyone else who has read an article, blog, or comic book that has talked about AI. Sources outside of our field, and a surprising number written by people who say they are knowledgeable, are all over the place on what’s inside the AI box and what’s outside.

This deep disconnect and the lack of a common definition waste a ton of time, because we first have to ask literally everyone we speak to, “What exactly do you mean when you say you want an AI solution?”

Always willing to admit that perhaps the misperception is mine, I spent several days gathering definitions of AI from a wide variety of sources and trying to compare them.

When a Data Scientist Talks to Another Data Scientist

Here’s where I’m coming from. For the last 20 or so years we’ve been building a huge body of knowledge and expertise in machine learning, that is, using a variety of supervised and unsupervised techniques to find and exploit patterns in the data and to optimize actions based on them. When Hadoop and NoSQL came along, we extended those ML techniques to unstructured data, but that wasn’t yet AI as we know it today.

It was only after the introduction of two new toolsets that we had what data scientists call AI. Those are reinforcement learning and deep neural nets. These in turn have given us image, text, and speech applications, game play, self-driving cars, and combination applications like IBM’s Watson (the question answering machine, not the new broad branding) that make it possible to ask human language questions of broad data sets like MRI images and have it determine whether an image shows cancer or not.

When a Data Scientist Talks to a Historian

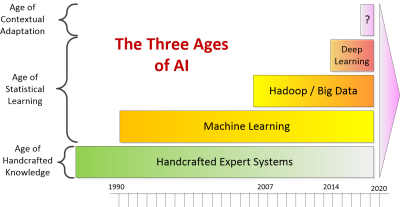

It is possible to take a much broader view of AI extending back even before the effective use of modern AI. This definition was first introduced to me by John Launchbury, the Director of DARPA’s Information Innovation Office. He expands our point of view to take in three ages of AI:

- The Age of Handcrafted Knowledge

- The Age of Statistical Learning

- The Age of Contextual Adaptation.

Launchbury’s explanation helped me greatly, but while the metaphor of ‘ages’ is useful, it creates a false impression that one age ends and the next begins as a kind of replacement. Instead I see this as a pyramid where what went before continues on and becomes a foundation for the next. This also explicitly means that even the oldest AI technologies can still be useful and are in fact still used. So the pyramid looks like this:

This definition even includes the handcrafted expert systems we built back in the 80s and 90s. Since I built some of those I can relate. This supports the ‘everything is AI’ point of view. Unfortunately this just takes too much time to explain, and it’s not really what most people are thinking about when they say ‘AI’.

Definitions from Data Science Sources

Since there is no definitive central reference glossary for data science, I looked in the next best places, Wikipedia and Techopedia. These entries, after all, were written, reviewed, and edited by data scientists. Much abbreviated but, I believe, accurately reported, here are their definitions.

Wikipedia

- “Colloquially, the term “artificial intelligence” is applied when a machine mimics “cognitive” functions that humans associate with other human minds, such as “learning” and “problem solving”….Capabilities generally classified as AI as of 2017 include successfully understanding human speech, competing at a high level in strategic game systems (such as chess and Go), autonomous cars, …and interpreting complex data, including images and videos.”

OK, so far I liked the fairly narrow focus, which agrees with reinforcement learning and deep neural nets. They should have stopped there, because in describing tools for AI, Wikipedia says:

- “In the course of 60 or so years of research, AI has developed a large number of tools to solve the most difficult problems in computer science,…neural network, kernel methods such as SVMs, K-nearest neighbor, …naïve Bayes…and decision trees.”

So Wikipedia has recast all of the machine learning techniques we mastered over the last 20 years as the development of AI?

Techopedia

- “Artificial intelligence (AI) is an area of computer science that emphasizes the creation of intelligent machines that work and react like humans. Some of the activities computers with artificial intelligence are designed for include: speech recognition, learning, planning, problem solving….Artificial intelligence must have access to objects, categories, properties and relations between all of them to implement knowledge engineering.”

I was with them so far. They even acknowledged the training requirement of having labeled outcomes associated with inputs. Then they spoiled it.

- “Machine learning is another core part of AI. Learning without any kind of supervision requires an ability to identify patterns in streams of inputs, whereas learning with adequate supervision involves classification and numerical regressions. Mathematical analysis of machine learning algorithms and their performance is a well-defined branch of theoretical computer science often referred to as computational learning theory.”

So apparently there is no such thing as unsupervised machine learning outside of AI, which includes all of ML? No association rules, no clustering? No one seems to have reminded these authors that CNNs and RNN/LSTMs require identified outputs to train (the definition of supervised, which they acknowledged in the first paragraph and contradicted in the second). And while some CNNs may be featureless, the most popular and widespread application, facial recognition, relies on hundreds of predefined features on each face to perform its task.
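For readers less familiar with the distinction I keep leaning on, here is a minimal sketch in plain Python. It is only an illustration under my own assumptions: the toy data, the label names, and the helper functions `nearest_neighbor_predict` and `two_means` are hypothetical and come from neither Wikipedia nor the second source. The supervised function cannot work without the identified outputs (`labels`), while the clustering function never sees them.

```python
# Toy one-dimensional data: two obvious groups of points (illustrative only).
points = [1.0, 1.2, 0.8, 5.0, 5.3, 4.9]

# Supervised learning needs an identified output for every training input.
labels = ["low", "low", "low", "high", "high", "high"]


def nearest_neighbor_predict(train_x, train_y, x):
    """Supervised: return the label of the training point closest to x."""
    best_index = min(range(len(train_x)), key=lambda i: abs(train_x[i] - x))
    return train_y[best_index]


def two_means(xs, iterations=10):
    """Unsupervised: split xs into two clusters without using any labels."""
    centers = [min(xs), max(xs)]  # simple deterministic starting centers
    groups = [[], []]
    for _ in range(iterations):
        groups = [[], []]
        for x in xs:
            # Assign each point to whichever center is closer.
            nearest = 0 if abs(x - centers[0]) <= abs(x - centers[1]) else 1
            groups[nearest].append(x)
        # Move each center to the mean of its group (keep it if the group is empty).
        centers = [sum(g) / len(g) if g else c for g, c in zip(groups, centers)]
    return groups


# Supervised: a new input is mapped to one of the known labels.
supervised_prediction = nearest_neighbor_predict(points, labels, 4.5)

# Unsupervised: the same points are grouped with no labels involved.
clusters = two_means(points)
```

Both techniques were standard predictive analytics long before anyone relabeled them, which is the point of my complaint above.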

It also appears that what we have been practicing over the last 20 years in predictive analytics and optimization is simply “computational learning theory”.

Major Thought Leading Business Journals and Organizations

Results were mixed. This survey article, ‘Reshaping Business with Artificial Intelligence’, published in the MIT Sloan Management Review in collaboration with BCG, drew on a global survey of more than 3,000 executives.

- “For the purpose of our survey, we used the definition of artificial intelligence from the Oxford Dictionary: “AI is the theory and development of computer systems able to perform tasks normally requiring human intelligence, such as visual perception, speech recognition, decision-making, and translation between languages.” However, AI is evolving rapidly, as is the understanding and definition of the term.”

Their definition seemed reasonably constrained and, beyond image and text recognition, at least one example involved a Watson-like question answering machine (QAM). I was a little worried by the inclusion of ‘decision making’ since that’s exactly what predictive and prescriptive analytics are intended to do. Did their survey respondents really understand the difference?

The Stanford 2017 AI Index Report is a great resource and an attempt to offer a great deal of insight into the state of AI today. However, its definition, especially with respect to machine learning, seems to ignore history.

- “ML is a subfield of AI. We highlight ML courses because of their rapid enrollment growth and because ML techniques are critical to many recent AI achievements.”

Of course the Harvard Business Review does not speak with one voice, but this article was at the top of the Google search for definitions of AI. Entitled “The Business of Artificial Intelligence” by Brynjolfsson and McAfee, it offers this unusual definition:

- “The most important general-purpose technology of our era is artificial intelligence, particularly machine learning (ML) — that is, the machine’s ability to keep improving its performance without humans having to explain exactly how to accomplish all the tasks it’s given. …Artificial intelligence and machine learning come in many flavors, but most of the successes in recent years have been in one category: supervised learning systems, in which the machine is given lots of examples of the correct answer to a particular problem. This process almost always involves mapping from a set of inputs, X, to a set of outputs, Y.”

So once again, apparently everything we’ve been doing over the last 20 years is AI? And the most successful portion of AI has been supervised learning? I’m conflicted. I agree with some of that, but not the part that says we’ve been practicing AI all along. I’m just not sure these authors really understand either. Nor would this be particularly helpful in explaining AI to a client or your boss.

Definitions by Major Platform Developers

By now I was getting discouraged and figured I knew what I would find when I started to look at the blogs and promotional literature created by the major platforms. After all, from their perspective, if everyone wants AI then let’s tell them that everything is AI. Problem solved.

And that is indeed what I found for the major platforms. Even when you get down to the newer specialized AI platforms (e.g. H2O.ai), they emphasize their ability to serve all needs, both deep learning and traditional predictive analytics. It’s one of the major trends in advanced analytic platforms to become as broad as possible to capture as many data science users as possible.

Let’s Get Back to the Founders of AI

How about if we go back to the founding fathers who originally envisioned AI? What did they say? Curiously they took a completely different tack, not by defining the data science, but by telling us how we’d recognize AI when we finally got it.

Alan Turing (1950): Can a computer convince a human they’re communicating with another human?

Nils Nilsson (2005): The Employment Test – when a robot can completely automate economically important jobs.

Steve Wozniak (2007): Build a robot that could walk into an unfamiliar house and make a cup of coffee.

Ben Goertzel (2012): When a robot can enroll in a human university and take classes in the same way as humans, and get its degree.

It’s also interesting that they couched their descriptions in terms of what a robot must do, recognizing that robust AI must be able to interact with humans.

See: this is still and video image recognition.

Hear: receive input via text or spoken language.

Speak: respond meaningfully to our input either in the same language or even a foreign language.

Make human-like decisions: Offer advice or new knowledge.

Learn: change its behavior based on changes in its environment.

Move: and manipulate physical objects.

You can immediately begin to see that many of the commercial applications of AI that are emerging today require only a few of these capabilities. But individually these capabilities are represented by deep learning and reinforcement learning.

And In the End

I don’t want to turn this into a crusade, but personally I’m still on the same page as the founding fathers: when someone asks us about an AI solution, they are talking about the ‘modern AI’ features ascribed to our robot above.

Realistically, the folks who are bringing AI to market, including all of the major advanced analytic platform providers and cloud providers, plus the popular press, are shouting us down. Their volume of communication is just so much greater than ours. It’s likely that our uneducated clients and bosses will hear the ‘everything is AI’ message. That, however, will not solve their confusion. We’ll still have to ask, “Well, what exactly do you mean by that?”

About the author: Bill Vorhies is Editorial Director for Data Science Central and has practiced as a data scientist since 2001. He can be reached at: