Transcript

Allen: I’ll be talking about the platform engineering journey, from copy and paste deployments to full GitOps. The goal of this session is to share some lessons I’ve learned during my career and hopefully give you some solutions to the challenges you’re already facing or may face in the future. The key learnings I want you to take away are, technology moves quickly and it can be really difficult and time-consuming to keep up with the latest innovations. I’ll share some practical strategies that will hopefully help make things easier. Secondly, automation will always save you time in the long run, even if it takes a bit more time in the short term. Thirdly, planning short and long-term responsibilities for a project as early as possible can save everyone a lot of headaches. Finally, a psychologically safe working environment benefits everyone.

Professional Journey

I’ve always loved technology. My first home computer was an Amstrad, one of those great big things that takes up the whole desk. We didn’t have home internet connection at the time. Games were on floppy disks that were actually still floppy and took around 10 minutes to load, if you were lucky. When I was a bit older, technology started to advance quickly. The Y2K bug took over headlines. Dial-up internet became mainstream. I bought my first mobile phone, which was pretty much indestructible. Certainly, better than my one today, which breaks all the time. I followed my passion for technology. I did a degree in software engineering, and a few years into my career, a PgCert in advanced information systems. After I graduated, I started working as a web developer for a range of small media companies. I was a project manager and developer for an EU-funded automatic multilingual subtitling project, which is a pretty interesting first graduate job.

Then I worked as a web developer, building websites for companies like Sony, Ben & Jerry’s, and Glenlivet. It involved a range of responsibilities: Linux, Windows Server, and database administration, building HTML pages and Photoshop designs, writing both frontend and backend code, and some project management thrown into the mix. As a junior developer, I did say at least a few times, "It works for me, so it must be a problem with something that operations manage", so I didn’t care about it. Now, of course, I know better. After a few years, around the time DevOps became popular, I started to work in larger enterprise companies as a DevOps engineer, as it was called then. Sometimes it was focused on automation and sometimes on software development. I moved into a senior engineering role and then a technical lead.

Then I moved to infrastructure architecture for a bit and then back to a tech lead again, where I am now. After two kids and many different tools, tech stacks, and projects later, I support a centralized platform and product teams by developing self-service tooling and reusable components.

A Hyper-Connected World

We’re in 2024. It’s a hyper-connected world. These days, we can provision hundreds or even thousands of cloud resources anywhere in the world, with the main barrier being cost. Today, around 66% of the world has internet access. We can contact anyone who’s connected 24 hours a day, 7 days a week. We can contact family and friends at the tap of a button. We can access work email anytime, day or night, which can be a good or a bad thing, depending on whether you’re on call. We can always get instant notifications about things happening around the world and can livestream events, for example, the eclipse. We can ask a question and receive a huge range of answers based on real-time information. We really are in a hyper-connected world.

Looking Back

Let’s take a quick step back to the 1980s. In 1984, the internet had around 1,000 devices that were mainly used by universities and large companies. This was the time before home computers were commonplace, and most people had to go to a bookshop or library if they wanted information for a school project or a particular topic. To set the context, here’s a video from a Tomorrow’s World episode in 1984, demonstrating how to send an email from home.

Speaker 1: “Yes, it’s very simple, really. The telephone is connected to the telephone network with a British Telecom plug, and I simply remove the telephone jack from the Telecom socket and plug it into this box here, the modem. I then take another wire from the modem and plug it in where the telephone was. I can then switch on the modem, and we’re ready to go. The computer is asking me if I want to log on, and it’s now telling me to phone up the main Prestel computer, which I’ll now do”.

Speaker 2: “It is a very simple connection to make”.

Speaker 1: “Extremely simple. I can actually leave the modem plugged in once it’s done that, without affecting the telephone. I’m now waiting for the computer to answer me”.

Allen: I’m certainly glad it’s a lot easier to send an email nowadays. By 1992, the internet had 1 million devices, and now in 2024, there are over 17 billion.

Technology Evolves Quickly

That leads into the first key learning, technology moves quickly. We all lead busy lives, and we use technology both inside and outside of work. In a personal context, technology can make our day-to-day lives a lot easier. For example, we saw a huge rise in video conferencing for personal calls during the COVID lockdowns. In a work context, we need to know what the latest advancements are and whether they’re going to be relevant and useful to us and our employer. Of course, there are key touch points like here at QCon, where you can hear some great talks about the latest innovations and discuss solutions to everyday problems. There are more technology-specific ones like AWS Summit, Google IO, and one of the many Microsoft events. Then there are lots of good blogs and other great online content. At a company level, you’ve got general day-to-day knowledge sharing with colleagues and hackathons, which can be really valuable.

With so much information, how can we quickly and effectively find the details we need to do our jobs? One tool that I’ve found to be a really useful discussion point at work is the Tech Radar. Tech Radars can really help with keeping up with the popularity of different technologies. The Tech Radar is an idea first put forward by Darren Smith at Thoughtworks. They’re essentially a state-stage review of techniques, tools, platforms, frameworks, and languages. There are four rings. Adopt, technology that should be adopted because it can provide significant benefits. Trial, technology that should be tried out to assess its potential. Assess, technology that needs evaluation before use. Hold, technology that should be avoided or decommissioned. Thoughtworks release updated Tech Radars twice a year, giving an overview of the latest technologies, including details, and whether any existing technologies have moved to a different ring.

I’ve found these can also be really useful at a company or department level to help developers choose the best tools for new development projects. I’ve personally seen them work quite well in companies as they help to keep everyone moving in the same technical direction and using similar tooling. Let’s face it, no one wants to implement a new service only to find that it uses a tool that’s due to be decommissioned. It will cost the business money to fund the migration, and all the work to integrate the legacy tool will be lost. Luckily, creating a basic technical proof of concept for the Tech Radar is quite easy. There are some existing tools you can use as a starting point.

For example, Zalando have an open-source GitHub repository you can use to get up and running. Or if you use a developer portal like Backstage, there’s a Tech Radar plugin for that as well. One of the key benefits about Tech Radar is it’s codified, unlike a static diagram, which can get old quite quickly. Things like automated processes, user contributions, and suggestions for new items can be easily integrated. Any approval mechanisms that are needed can also be added quite easily.
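To make the idea of a codified radar concrete, here is a minimal sketch in Python. It is not how the Zalando or Backstage tooling stores its data; the `RadarEntry` type, the `moved_entries` helper, and the ring assignments are all hypothetical and only illustrate how entries kept as data can be compared automatically between two editions.

```python
from dataclasses import dataclass
from enum import Enum


class Ring(Enum):
    ADOPT = "adopt"
    TRIAL = "trial"
    ASSESS = "assess"
    HOLD = "hold"


@dataclass(frozen=True)
class RadarEntry:
    name: str
    quadrant: str  # for example "tools" or "platforms"
    ring: Ring


def moved_entries(previous, current):
    """Return (name, old_ring, new_ring) for entries whose ring changed."""
    old_rings = {entry.name: entry.ring for entry in previous}
    return [
        (entry.name, old_rings[entry.name], entry.ring)
        for entry in current
        if entry.name in old_rings and old_rings[entry.name] != entry.ring
    ]


# Hypothetical ring assignments, invented for illustration only.
previous_radar = [
    RadarEntry("Terraform", "tools", Ring.ADOPT),
    RadarEntry("Pulumi", "tools", Ring.ASSESS),
    RadarEntry("Terraform CDK", "tools", Ring.ASSESS),
]
current_radar = [
    RadarEntry("Terraform", "tools", Ring.ADOPT),
    RadarEntry("Pulumi", "tools", Ring.ASSESS),
    RadarEntry("Terraform CDK", "tools", Ring.TRIAL),
]

for name, old, new in moved_entries(previous_radar, current_radar):
    print(f"{name}: {old.value} -> {new.value}")
```

Because the radar is plain data, a check like this could run in a pipeline and announce ring changes whenever a new edition is merged.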

Let’s take a general example. You’re in a big company, and you’re selecting an infrastructure as code tool for a new project to provision some AWS cloud infrastructure. Your department normally uses Terraform. Terraform’s probably the default choice. There are plenty of examples in the company, some InnerSource modules. You know you can write the code in a few days. Looking at the other options, Chef is good, but it’s not really used very much in the departments at the moment. As the company tends to move towards more cloud-agnostic technology, they don’t really use CloudFormation. Lately, you’ve heard that some teams have been trying out Pulumi, and some have tried out the Terraform CDK. You look at the company Tech Radar, and see that Pulumi is under assess, and the Terraform CDK is under trial.

As the project has tight timelines, you know you need a tool that’s well integrated with company tooling. While it might be worth confirming that Pulumi is still in the assess stage, after that, you probably want to focus any remaining investigation time on checking if there’s any benefit to trialing the Terraform CDK. If you can’t find any benefit to that, then you’d probably go with standard Terraform, because it’s the easiest option. You know it integrates with everything already, even if it’s not particularly innovative. Of course, if the project didn’t have those time constraints, then you could spend more time investigating whether there’s actually any benefit to using the newer tooling, the Terraform CDK or Pulumi, and then put a business case forward to use those.

Another strategy that I found to be quite useful in adopting new tools within an organization is InnerSource. It’s something that’s been gaining popularity recently. It’s a term coined by Timothy O’Reilly, the founder of O’Reilly Media. InnerSource is a concept of using open-source software development practices within an organization to improve software development, collaboration, and communication. More practically, InnerSource helps to share reusable components and development effort within a company. This is something that can be really well suited to larger enterprise organizations or those with multiple departments. What are the benefits of InnerSource? You don’t need to start from scratch. You can use existing components that have already been developed internally for the company, which means less work for you, which is always a win. InnerSource components can also be really useful if a company has specific logic.

For example, if all resources of a certain type need to be tagged with specific labels for reporting purposes. It’s an easy way of making sure all resources are compliant, and changes can be applied in a single place and then propagated to all areas that use the code. If you find suitable components that meet, for example, 80% of requirements, then you can spend your development time building the extra 20% functionality, and then contributing that back to the main component for other people to use in the future and also for yourself to use in the future. What are the challenges? If pull requests take a long time to be merged back to the main InnerSource code, it can then mean that you have multiple copies of the original InnerSource code in your repo or in the branch. You then need to go back and update your code to point to the main InnerSource branch once your PR has been merged.

In reality, I’ve seen that InnerSource code that’s been copied across can end up staying around for quite a long time because people forget to go back and repoint to the main InnerSource repo. Making sure that InnerSource projects have active maintainers can help solve that issue. One other problem is not having shared alignment on the architecture of components. Should the new functionality be added to the existing components, or is it best to create a whole new component for it? Having alignment on things like these can make the whole process a lot easier.
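The tagging example mentioned earlier can be sketched as a small shared component. This is only an illustration in Python; the `REQUIRED_TAGS` policy and the `with_required_tags` helper are hypothetical names, not part of any real InnerSource library. The point is that the rule lives in one place, so a change to the policy propagates to every team that uses the helper.

```python
# Hypothetical company policy: every resource must carry these tag keys.
REQUIRED_TAGS = {"cost-centre", "owner", "environment"}


def with_required_tags(resource_tags, defaults):
    """Merge team-supplied tags over shared defaults and fail fast if a required tag is missing."""
    merged = {**defaults, **resource_tags}
    missing = REQUIRED_TAGS - merged.keys()
    if missing:
        raise ValueError(f"missing required tags: {sorted(missing)}")
    return merged


tags = with_required_tags(
    {"owner": "team-payments"},
    {"cost-centre": "cc-123", "environment": "dev"},
)
print(tags)
```

A team that needs an extra rule can contribute it back to the shared helper rather than keeping a private copy.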

Automation

Moving on to another key learning. Automation will almost always save you time and effort in the long run, even if it takes a bit more effort initially. Running through the three key terms, there’s continuous integration, the regular merging of code changes into a code repository, triggering automated builds and tests. Continuous delivery, the automated release of code that’s passed the build and test stages of the CI step. Then continuous deployment, the automated deployment of released code to a production environment with no manual intervention. Going back to the topic of copy and paste deployments. Here’s an example of a deployment pipeline from somewhere I worked a few years ago. Developers, me included, had a desktop which had file shares for each of the different environments, so dev, test, and prod, which linked to the servers in the different environments. Changes were tracked in a normal project management tool, think something like Jira.

Code was committed to a local Subversion checkout, and unit tests were run locally, but there was no status check to make sure they had actually been run. Deployments involved copying code from the local desktop into the directory, and then it would go up to the server. As I’m sure you can see, there are a lot of downsides to this deployment method. Sometimes there were problems with the file share, and not all files were copied across at the same time, meaning the environment was out of sync. Sometimes, because of human error, not all of the files were copied across to the new environment. As tests were run locally and there were no status checks, changes could be deployed that hadn’t been fully tested.
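As an illustration of the kind of check that was missing from the copy and paste process, the following Python sketch compares a source directory with a deployment target and reports files that are missing, extra, or different. It was not part of the setup described in the talk; the function names are hypothetical and it uses only the standard library.

```python
import hashlib
from pathlib import Path


def file_digests(root):
    """Map each file's path (relative to root) to the SHA-256 of its contents."""
    root = Path(root)
    return {
        path.relative_to(root).as_posix(): hashlib.sha256(path.read_bytes()).hexdigest()
        for path in sorted(root.rglob("*"))
        if path.is_file()
    }


def out_of_sync(source_dir, target_dir):
    """Return relative paths that are missing from, extra in, or different in the target."""
    source = file_digests(source_dir)
    target = file_digests(target_dir)
    return sorted(
        name
        for name in source.keys() | target.keys()
        if source.get(name) != target.get(name)
    )
```

An empty list from `out_of_sync` means the two directories hold the same files with the same contents. An automated pipeline makes this kind of manual verification largely unnecessary, which is part of the argument for moving away from file-share deployments.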

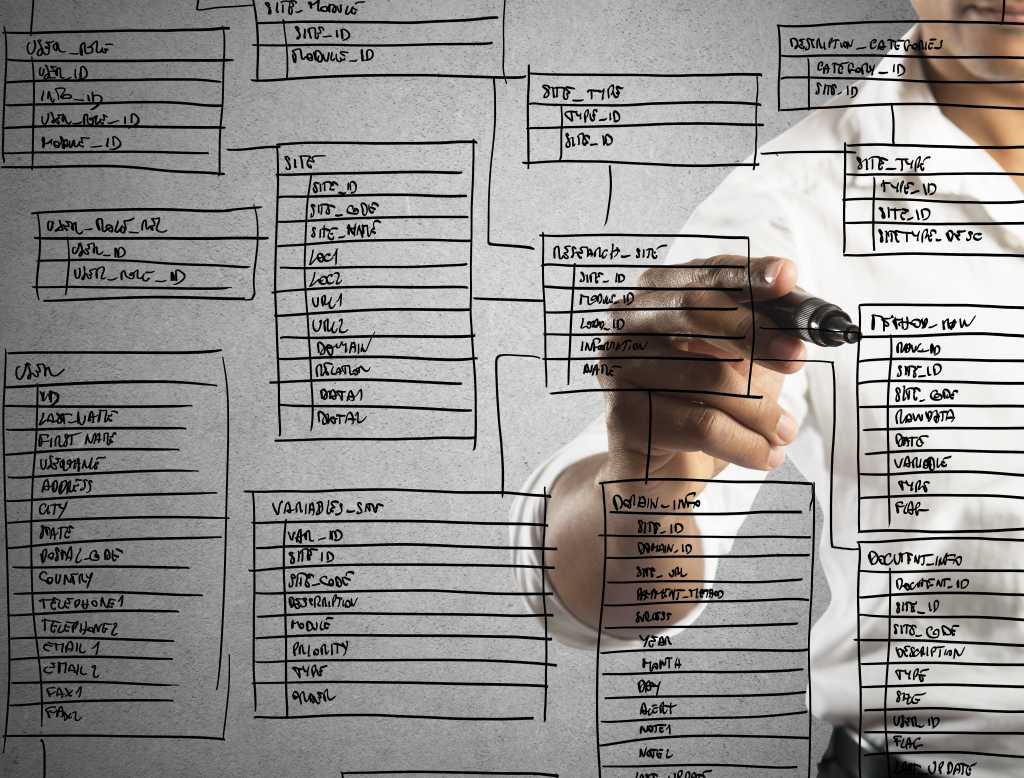

Another issue that we had quite a few times was that code changes needed to align with database changes. I know it’s still a problem nowadays, but especially with this method, the running code could be incompatible with the database schema, so trying to update both at the same time didn’t work, and you ended up with failed requests from the user.

Given all these downsides, and as automation was starting to become popular, we decided to move to a more automated development approach. There are many CI/CD tools available: GitLab, GitHub Actions, GoCD. In this case, we used Jenkins. Even after automation, we didn’t have full continuous deployment, as production deployment still required a manual click to trigger the Jenkins pipeline. I’ve seen that quite a lot in larger companies and in services that need high availability because they still need that human in the mix to trigger the deployment.

The main benefit of using automation, as is probably quite clear, was that deploying code from version control instead of a developer’s local checkout reduced mistakes. As the full deployment process was triggered from a centralized place, Jenkins, at the click of a button, any local desktop inconsistencies were removed completely, which made everyone’s life a lot easier. Traceability of the deployments was a lot easier as well, as you can see everything that’s happened in Jenkins, so it’s easier to identify the root cause of any issues. With Jenkins, as with any other tool, there are quite a few integrations that you can use to connect with your other tooling.

Moving on to another automated approach, let’s look at GitOps for infrastructure automation. This was first coined by Alexis Richardson, the Weaveworks CEO. GitOps is a set of principles for operating and managing software systems. The four principles are, the desired system state must be defined declaratively. The desired system state must be stored in a way that’s immutable and versioned. The desired system state is automatically pulled from source without any manual intervention.

Then, finally, continuous reconciliation. The system state is continuously monitored and reconciled to whatever’s stated in the code. CI/CD is important for application deployments, of course, but something that can be overlooked, as it tends to change less often than the application code, is the underlying infrastructure deployments, which can be pretty easy to automate. It can be quite common for legacy systems not to have that infrastructure automation in place at all. There are lots of infrastructure as code tools already, generic ones like Terraform, Pulumi, Puppet, Ansible, or vendor-specific ones like Azure Resource Manager, Google Cloud Resource Manager, and AWS CloudFormation.
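The declarative and reconciliation principles can be illustrated with a toy example. The Python sketch below is not how Terraform or any GitOps controller is implemented; it simply treats the desired state (what is in Git) and the actual state as dictionaries, with hypothetical bucket names, and works out what would need to change to make them match.

```python
def plan_changes(desired, actual):
    """Compare desired state (from Git) with actual state and list the actions needed."""
    actions = []
    for name, config in sorted(desired.items()):
        if name not in actual:
            actions.append(("create", name))
        elif actual[name] != config:
            actions.append(("update", name))
    for name in sorted(actual.keys() - desired.keys()):
        actions.append(("delete", name))
    return actions


def reconcile(desired, actual):
    """Apply the planned actions to a copy of the actual state and return it."""
    result = dict(actual)
    for action, name in plan_changes(desired, actual):
        if action == "delete":
            del result[name]
        else:
            result[name] = desired[name]
    return result


# Hypothetical resources, for illustration only.
desired = {
    "logs-bucket": {"versioning": True},
    "assets-bucket": {"versioning": False},
}
actual = {
    "logs-bucket": {"versioning": False},
    "scratch-bucket": {"versioning": False},
}

print(plan_changes(desired, actual))
print(plan_changes(desired, reconcile(desired, actual)))
```

The first print shows one create, one update, and one delete; the second shows an empty plan, because after reconciliation the actual state matches the code. A real tool runs this comparison continuously, which is what makes drift visible.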

A tool that I’ve had some experience with is Terraform. I’m going to run through a very basic workflow to show you how easy it is to set up. It implements the GitOps strategy for provisioning infrastructure as code using Terraform Cloud to provision the AWS infrastructure from GitHub source code. I’ll show you the diagram. It’ll make it clearer. It’s something that could be used for new infrastructure or could be applied to existing infrastructure that isn’t already managed by code. Let’s go through an overview of the setup. It’s separated into two parts. The green components show the identity provider authentication setup between GitHub and Terraform Cloud, and AWS and Terraform Cloud. The purple components rely on the green components to provision the resources defined in Terraform in the GitHub repository.

I’ll show you some of the Terraform that can be used to configure the setup as well as screenshots from the console just to make it clearer. Let’s start by setting up the connection between Terraform Cloud and GitHub. This can be done using this Terraform resource because there is a Terraform Cloud provider, as there is for many other tools as well. Unfortunately, this resource does require a GitHub personal access token, but it is still possible to automate, which is the point of this one. Here are the screenshots of the setup. This sets up the VCS connection between GitHub and Terraform Cloud. As you can see, there are multiple different options: GitHub, GitLab, Bitbucket, Azure DevOps. This is the permission step to authorize the connection between Terraform Cloud and your GitHub repository. This can be set at a repository level or at a whole organizational level.

In terms of least privilege, it’s probably best to set it at the repository level if you can. This is an example of a Terraform Cloud workspace configuration, and then the link to the GitHub repo using the tfe_workspace resource. There are lots of other configuration options, and as always with infrastructure as code, it’s very powerful and easy to scale. You can create multiple workspaces all with the same configuration. This is a repository selection step to link to the new workspace. Only the repositories that you’ve authorized will appear. Once you’ve done that, it’s on to configuration. You’ve got the auto-apply settings. If you want to do fully continuous deployment, you can configure this to auto-apply whenever there’s a successful run. Then, what about the run triggers? You can get it to trigger a run whenever any changes are pushed to any file in the repo, or constrain it slightly, so restrict it to particular file paths or particular Git tags.

It’s so flexible that there are hundreds of different options that you can use, but, yes, the point here is that it’s easy to set up and configure to your use case. Then the PR configuration. Ticking this option will automatically trigger a Terraform plan every time a PR is created. Then the link to the Terraform plan is also in the GitHub PR, so it’s fully integrated. That’s the Terraform Cloud and GitHub connection setup. Moving on to Terraform Cloud and AWS connection. First, the identity provider authentication. This is the Terraform to get the Terraform Cloud TLS certificate and then set up the connection. This is a screenshot as well. Then you’ve got the IdP setup with the IAM role, allowing it to assume that role. That’s in here. Then you’ve got the Terraform Cloud AWS authentication saying what permissions Terraform Cloud has.

In here, it’s S3 anything, so it’s probably quite overly permissive, but it’s just showing the example. You can configure this to any AWS IAM policy that you like. That’s the screenshot. Now the AWS part has been configured. You just add the environment variables to Terraform Cloud to tell it which AWS role to assume and which authentication mode to use. That’s it.
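The slides with the actual Terraform are not reproduced in this transcript, so the following Python sketch only illustrates the shape of the two pieces described above: an IAM trust policy that lets Terraform Cloud assume a role through the identity provider, and the workspace environment variables that switch on that authentication mode. The claim names, the audience value, and the variable names `TFC_AWS_PROVIDER_AUTH` and `TFC_AWS_RUN_ROLE_ARN` are assumptions based on HashiCorp's dynamic provider credentials documentation and should be checked against the current docs; the account ID, organization, workspace, and role name are hypothetical.

```python
import json


def tfc_trust_policy(account_id, organization, workspace, project="Default Project"):
    """Build a trust policy allowing one Terraform Cloud workspace to assume a role via OIDC."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {
                    # ARN of the identity provider created for app.terraform.io.
                    "Federated": f"arn:aws:iam::{account_id}:oidc-provider/app.terraform.io"
                },
                "Action": "sts:AssumeRoleWithWebIdentity",
                "Condition": {
                    # Assumed claim values; verify against HashiCorp's documentation.
                    "StringEquals": {"app.terraform.io:aud": "aws.workload.identity"},
                    "StringLike": {
                        "app.terraform.io:sub": (
                            f"organization:{organization}:project:{project}"
                            f":workspace:{workspace}:run_phase:*"
                        )
                    },
                },
            }
        ],
    }


def workspace_env_vars(role_arn):
    """Environment variables that tell Terraform Cloud which role to assume and how."""
    return {
        "TFC_AWS_PROVIDER_AUTH": "true",
        "TFC_AWS_RUN_ROLE_ARN": role_arn,
    }


# Hypothetical identifiers, for illustration only.
policy = tfc_trust_policy("123456789012", "example-org", "s3-demo")
env_vars = workspace_env_vars("arn:aws:iam::123456789012:role/tfc-s3-demo")

print(json.dumps(policy, indent=2))
print(env_vars)
```

Restricting the subject condition to a single workspace, rather than the whole organization, follows the same least-privilege reasoning as authorizing GitHub at the repository level.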

To recap, the green components that have just been set up are the authentication between Terraform Cloud and GitHub and the auth between Terraform Cloud and AWS. This only needs to be done once for each combination of Terraform Cloud workspace, GitHub repository, and AWS account configuration. As we saw, to achieve full automation, it can also be done using Terraform, which means it’s scalable. However, at some point during the initial bootstrap, you do need to manually create a workspace to hold the state for that initial Terraform.

Let’s move on to the purple components. These are to provision the resources defined in Terraform. This is a very basic demo repo to create an S3 bucket in an AWS account. In a real-life scenario, it would also have the tests and any linting and validation too. The provider configuration is easy, because it’s already been set up. Then define some variables and the values. Then, of course, the main Terraform S3 bucket and two configuration options, there are many. Now that connection has been set up, what would we need to do to deploy? Create a new branch, commit the code, push to GitHub and create a PR, and that will trigger the plan. You can see what it’s going to provision in AWS. Once the PR has been approved and merged to the main branch, it will then trigger another plan.

If you set auto-apply, then Terraform apply will run automatically. Now that’s been configured, scaling this to manage a lot of resources is really easy. Say you want 30 S3 buckets instead of one, all with the same configuration. Write the code, create a feature branch, create a PR, and then the automatic plan will run, and then merge it into main, and there you go. You’ve got 30 buckets: easy to manage, easy to configure. For any changes in the future, all you need to do is update the code. It’s the same with an EKS cluster or any other resource.

Why automate? It makes it easy to standardize and scale, as we saw in that example. It gives good visibility and traceability over infrastructure deployments. It means multiple people can work in the same repo, and everyone can see what’s going on. If you have the proper Git branch protection strategy in place, it means there’ll be no risk of a Terraform apply running using any outdated code. There’s also the option to import existing resources that have been created manually into your Terraform state using Terraform import. Because I’ve seen, especially with some legacy apps, at the very beginning, things were just created manually, but now they need to be standardized, and certain options, for example, should it be public, should it be private, now need to be set. Importing those into the Terraform state can help you align that. Just one caveat.

Drift detection, which is obviously another point for the GitOps side of things, is only available in the paid-for version of Terraform Cloud, so you do have to pay for that. This example used Terraform Cloud, GitHub, and AWS. Of course, there are many other tools out there with an equally rich set of integrations. They also tend to have code examples and step-by-step guides to make things easier.

To recap, the choice between continuous delivery and continuous deployment might depend on your organizational policy or general ways of working, especially for production. It’s also worth considering the different system components and which strategy is best for each. For example, it might be best to use continuous delivery for database provisioning, but still continuous deployment for application code. Are different strategies needed in different environments? For example, continuous deployment for dev, but staging and production actually need that manual step. There are lots of tools available: Jenkins, CircleCI, GitLab, GitHub Actions, and many more. You need to choose the best one for your use case. Is one of them on your company Tech Radar? Are any of the others being used by other teams in your department?
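The idea of using different strategies in different environments can be written down as a simple rule. The Python sketch below is a hypothetical illustration, not the configuration of any particular CI/CD tool: dev releases automatically once checks pass, while staging and production also wait for a manual approval.

```python
from enum import Enum


class Strategy(Enum):
    CONTINUOUS_DEPLOYMENT = "continuous deployment"  # no manual step
    CONTINUOUS_DELIVERY = "continuous delivery"      # manual approval before release


# Hypothetical mapping, mirroring the example of dev versus staging and production.
STRATEGY_BY_ENVIRONMENT = {
    "dev": Strategy.CONTINUOUS_DEPLOYMENT,
    "staging": Strategy.CONTINUOUS_DELIVERY,
    "production": Strategy.CONTINUOUS_DELIVERY,
}


def can_release(environment, checks_passed, manually_approved=False):
    """Decide whether a change may be released to the given environment."""
    if not checks_passed:
        return False
    if STRATEGY_BY_ENVIRONMENT[environment] is Strategy.CONTINUOUS_DEPLOYMENT:
        return True
    return manually_approved


print(can_release("dev", checks_passed=True))
print(can_release("production", checks_passed=True))
print(can_release("production", checks_passed=True, manually_approved=True))
```

In the Terraform Cloud demo, the equivalent switch is the auto-apply setting on each workspace.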

Setting Clear Responsibilities

Moving on to setting clear responsibilities. I’ll tell you a story about a small operations department that supported a number of product teams. This is a true story. On Monday afternoon, a product team deployed a change. Everything looks good, so everyone goes home. 2 a.m. on Tuesday, the operations team get an on-call alert that clears after a few minutes. 4 a.m., they get another alert that doesn’t clear. Unfortunately, there’s no runbook for this service, for how to resolve the issue, and the person on-call isn’t familiar with the application. They’ve got no way to fix the problem. They have to wait until five hours later at 9 a.m., when they can contact the product team to get them to take a look. Later that day, another team deployed a change. Everything looks good, they all go home. However, this time at 11 p.m., on-call gets an alert.

This time, the service does have a runbook, but unfortunately, none of the steps in the runbook work. They request help on Slack, but no one else is online. They call people whose phone numbers they have, but there’s no answer. They need to wait until people come online in the morning, a good few hours later, to resolve it. Not a good week for on-call, and definitely not a pattern that can be sustained long-term.

What are some reliability solutions? In the previous scenario, out-of-hours site reliability was the responsibility of the operations team, while working hours site reliability was the responsibility of the product team. The Equal Experts playbook describes a few site reliability solutions. You build it, you run it, as you’ve heard quite a lot. The product team receives the alerts and is responsible for support. The other option is Operational Enablers, which is a helpdesk that hands over issues to a cross-functional operational team, or Ops run it. An operational bridge receives the alerts, hands over to Level 2 support, who can then hand over to Level 3 if required. Equal Experts advocates that you build it, you run it for digital systems, and Ops run it for foundational services in a hybrid operating model.

Then, how about delivery solutions? In the previous scenario, the product team delivered the end-to-end solution, but they weren’t responsible for incident response. Of course, different solutions might work for different use cases. A large company might have dedicated networking, DBA and incident management teams. For a smaller company, some of these roles or teams might be combined. This is a solution from the Equal Experts playbook. You’ve got, you build it, you run it. The product team is responsible for application build, testing, deployment, and incident response, or Ops run it. The product team is responsible for application build and testing, before handing over to an operations team for change management approval and release.

Applying the you build it, you run it model to the original example, the product team would be the ones who’d be responsible for incident response, which means they might not have deployed at 4 p.m., because they didn’t want to be woken up at night. Also, if they were on-call, they could probably have solved it a lot more quickly because they know their application.

Just to recap, all services should have runbooks before reaching production. This means anyone with the required access can help support the application if needed. Runbooks should be regularly reviewed, and any changes to the application, infrastructure, or running environment should be reflected in the runbook. If possible, it can be worth setting up multiple levels of support. Level 1 support can look at the runbook. If they can’t deal with it, they can then hand over to a subject matter expert who can hopefully resolve it more quickly. Monitoring and alerting should be designed during the development process. Alerts can be tested in each environment. Testing them in dev makes it a lot easier when you get to staging, and means fewer alerts in production.
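One way to enforce the runbook rule is a check in the release process. The Python sketch below is a hypothetical illustration: the `Service` fields, the example URL, and the 180-day review window are assumptions chosen for the example, not recommendations from the talk.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Service:
    name: str
    runbook_url: Optional[str] = None
    runbook_reviewed: Optional[date] = None


def production_blockers(service, today, max_age_days=180):
    """List the reasons a service should not be promoted to production yet."""
    if not service.runbook_url:
        return ["no runbook"]
    if service.runbook_reviewed is None:
        return ["runbook has never been reviewed"]
    if today - service.runbook_reviewed > timedelta(days=max_age_days):
        return ["runbook review overdue"]
    return []


# Hypothetical service, for illustration only.
checkout = Service(
    name="checkout",
    runbook_url="https://wiki.example.com/runbooks/checkout",
    runbook_reviewed=date(2024, 1, 10),
)
print(production_blockers(checkout, today=date(2024, 4, 1)))
print(production_blockers(Service(name="search"), today=date(2024, 4, 1)))
```

A check like this would have flagged the first service in the on-call story before it reached production; it cannot tell whether the steps in a runbook actually work, which is why regular review still matters.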

Then, if budget allows, it can be worth using a good on-call and incident management tool. For example, PagerDuty, Opsgenie, ServiceNow, Grafana. There are many of them. They can give you things like real-time dashboards, observability, and easy on-call scheduling, and a lot of them have automated configuration. I’ll use the Terraform example again: a lot of them have Terraform providers, or providers for other tools, so they’re quite easy to set up and configure.

Psychological Safety

Let’s move on to psychological safety. I’m going to tell you a story. Once upon a time, there was an enthusiastic junior developer. Let’s call them Jo. They were given a task to clean up some old files from a file system. Jo wrote a script. They tested it out. Everything looked good. Jo then realized there were a ton of other similar files in other directories that could be cleaned up. They decided to go above and beyond and they refined the script to search for all directories of a certain type. They tested it locally. Everything looked good to go. They ran the script against the internal file system and all of the expected files were deleted. They checked the file system and saw there was tons of space. They gave themselves a pat on the back for a job well done.

However, suddenly other people in the office started to mention their files were missing from the file system. Jo decided to double-check their script, just in case. Jo realized that the command to find the directories on the file system to delete files was actually returning an empty string. They were basically running rm -rf /* at the root directory level and deleting everything. Luckily for Jo, they had a supportive manager and they went to tell them what happened. Their manager attempted to restore the files from the latest backup, but unfortunately that failed. The only remaining option was to stop all access to the file system and try and salvage what was left, and then restore from a previous day’s backup. As at this time, most people were using desktop computers and the internal file system for document storage, not much work was done for the rest of the day. The impact was quite small in this case, but obviously in a different scenario, it could have been a lot worse.
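The failure in Jo's script, an empty search result turning into a delete at the root, is a common one and can be guarded against. The Python sketch below is a hypothetical guard, not Jo's actual script: it refuses an empty path and anything outside an explicitly allowed directory before any deletion is attempted. The paths in the usage example are made up.

```python
from pathlib import Path


def safe_cleanup_target(found_directory, allowed_root):
    """Resolve a directory returned by a search and refuse anything unsafe to delete."""
    # An empty result is exactly the case that led to deleting from the root.
    if not found_directory or not found_directory.strip():
        raise ValueError("search returned an empty path; refusing to delete")
    target = Path(found_directory).resolve()
    root = Path(allowed_root).resolve()
    # The target must sit strictly inside the allowed root, never be the root itself.
    if root not in target.parents:
        raise ValueError(f"{target} is not inside {root}; refusing to delete")
    return target


# Hypothetical paths, for illustration only.
print(safe_cleanup_target("/srv/share/tmp/old-reports", "/srv/share/tmp"))

for bad_path in ["", "/", "/srv/share"]:
    try:
        safe_cleanup_target(bad_path, "/srv/share/tmp")
    except ValueError as error:
        print(f"blocked: {error}")
```

A guard like this complements, rather than replaces, least privilege access and tested backups: the script should not have had permission to delete everything in the first place.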

What happened to Jo? Jo certainly learnt a lesson. Luckily for Jo, the team were quite proactive. They ran an incident post-mortem to learn from the incident, find the root cause of the problem, and identify any solutions. What was the outcome? It was quite good. They put a plan in place to implement least privilege access, and regular backup and restore testing was also put in place. Then Jo, of course, never ran a destructive, untested development script in a live environment again. Least privilege access, as I’m sure most of you know, is a cybersecurity best practice where users are given the minimum privileges needed to do a task. What are the main benefits? In general, forcing users to assume a privileged role can be a good reminder to be more cautious. It also protects you as an employee.

In the absence of a sandbox, you know you can test out new tools and scripts without worrying about destroying key resources. It also protects the business as they know that only certain people have the privileges to perform destructive tasks. Then, in larger companies, well-defined permissions are good evidence for security auditing, and they provide peace of mind for cybersecurity teams and more centralized functions.

What is psychological safety? The belief you won’t be punished for speaking up with ideas, questions, concerns, or mistakes. Amy Edmondson codified the concept in the book, “The Fearless Organization”. There have been many studies done on psychological safety. One example is Project Aristotle, which was a two-year study by Google to identify the key elements of successful teams. Psychological safety was one of the five components found in high-performing teams by that study. There are lots of workshops and toolkits that can provide proper training and give you more information.

To give an example of some questions you might see in a questionnaire, here are some of the questions from a questionnaire you can take yourself on Amy Edmondson’s website, Fearless Organization Scan. If you make a mistake on this team, it is often held against you. Members of this team can bring up problems and tough issues. People on this team sometimes reject others for being different. It is safe to take a risk on this team. It is difficult to ask other members of this team for help. Working with members of this team, my unique skills and talents are valued and utilized. Then, finally, no one on this team would deliberately act in a way that undermines my efforts.

How did working in a team with good levels of psychological safety help Jo? Jo acknowledged their involvement and shared the root cause of the problem so it could be dealt with as quickly as possible. If Jo hadn’t spoken up, it would probably have taken longer to actually find the root cause and fix it. Jo’s direct manager was approachable and acknowledged there were key learnings and improvements that could be made, and they both actively engaged in that post-mortem to find the solution. It’s always worth considering, if Jo had seen other people being punished for admitting their mistakes, would they have spoken up at all?

Recap

To recap on the key learnings, technology evolves quickly. Here are some links of things that we went through. We ran through general Tech Radars and custom Tech Radars and how they can be useful. We ran through the benefits and some pitfalls of InnerSource. Then, automation, which will save you time and effort in the long run. We touched on CI/CD and considerations for continuous delivery or continuous deployment. Should you use different strategies for different environments and deployment types? Then the advantages of GitOps. Then the demo of the GitHub, Terraform, and AWS setup.

Then, setting clear responsibilities. We ran through the Equal Experts delivery and site reliability solutions. How all services should have runbooks before they go to production. How it’s important to design and implement monitoring and alerting during the development process. Then, finally, how working in a psychologically safe working environment benefits everyone. We ran through some of the questions from that psychological safety questionnaire, and also how blameless incident post-mortems can be helpful in maintaining psychological safety by helping everyone learn from the incidents, finding the root cause of the problem, and also identifying the solutions.

Questions and Answers

Participant: With the move from the copy-paste bit to the GitOps bit, how long did that take? How did you manage that transition?

Allen: That probably took a few months to a year. We started with development and then moved to production. That was probably the biggest step. Obviously, we had other work priorities as well, so it was a case of trying to balance it in between those. Once it was done, everyone realized the benefits, but it was a slightly painful process.
